I’m going to be on holiday from Dec. 23 until Dec. 30 — so, no more postings during that time, I expect … unless something really extremely weird happens that requires blogging!

Okay, so, anyone who sees really extremely weird things, send them in and entice me to break my fast.

Well, not exactly Gollum. But Andy Serkis, the guy who plays Gollum in The Two Towers, did a lot of acting to produce the part — including wearing a motion-capture suit, and even motion-capture dots on his face, so that Gollum’s creepy-ass hopeful smiles and grimaces are, in fact, Serkis’. There’s a great piece by my friend Justine Elias in today’s New York Daily News about how the magic works:

During principal photography, Serkis, dressed in a neutral-colored suit, was on location, acting alongside Frodo (Elijah Wood) and Sam (Sean Astin). “We shot two versions of every scene, one with me in, and one with me out, standing off-camera and saying my lines,” says Serkis. “If Peter [Jackson] liked what I’d done, he told the animators to use my movements and paint over, frame by frame, exactly what I’d done.” So when Gollum attacks Sam or pulls Frodo out of a swamp, the water or dust kicked up is the real thing.

An ancillary question is — can anybody get nominated for an Oscar for such a performance?

“I’d compare Andy’s work to John Hurt’s performance in ‘The Elephant Man,’” says Mark Ordesky, one of the “Ring” producers. “Hurt was barely recognizable under all that makeup, but he gives a brilliant performance. Our animators captured Andy’s every facial tic, every tiny gesture — the computer effects are almost like computer-generated makeup on his face.”

Arianna Huffington goes nonlinear in this great story on Salon about the misuse of apostrophes. You’ve all seen these types of mistakes, of course — using an apostrophe to pluralize a noun. And you all know (god, I hope so, anyway!) that this is ungrammatical. But when Huffington gets into a big fight with her daughter over this, her daughter refuses to believe that using an apostrophe to pluralize isn’t acceptable, because everyone does it that way: Advertisers, other kids at school, and even — remarkably — the teachers at her kid’s school.

But here’s the rub. Huffington spies a pluralizing apostrophe in the New York Times, and the lid flies off:

Things only got worse the next morning when, while reading the New York Times, I came across not one, but two examples of apostrophes being put in the wrong place — including one in a column by my hero, Paul Krugman. In writing about inherited wealth, the erudite Princeton professor made mention of “Today’s imperial C.E.O.’s.” Isabella’s words echoed in my brain: “This is how everyone does it here.”

Flummoxed, I got ahold of the New York Times’ manual of style and, to my horror, discovered that the paper’s rash of apostrophe errors had not been the result of sloppy copy-editing but a conscious executive decision to ignore the rules of proper punctuation.

Is it possible that grammar is going to change? That the apostrophe will become so commonly misused for pluralization that it’s eventually accepted as grammatically correct? After all, grammar is just an agreement on a set way of doing things.

In fact, I tend to get in fights with editors over my use of antiquated punctuation. Specifically, I frequently enjoy stringing tons of clauses together using semicolons. Then the drama begins: I’ll submit a story full of them; the editor will go nuts; I’ll resist; they’ll fight back; and in the end, they’ll remove just about any sentence that vaguely resembles the one I’m currently writing. Nicholson Baker once wrote a brilliant essay, reprinted in his The Size of Thoughts, called “The History of Punctuation.” He noted a ton of really weird pieces of punctuation that once were considered grammatically correct, but have vanished like the dodo. One of them was a colon followed by an em-dash — i.e. “:—”. How cool is that? I’ve considered using stuff like that in stories, but I’m afraid my editors’ heads would simply explode.

(Update: Franco Baseggio wrote me to note that Eugene Volokh has issued a very well-researched riposte to Huffington’s article. Volokh checks the 1989 Webster’s Dictionary of English Usage, the 1996 New Fowler’s Modern English Usage, and the 1985 Harper Dictionary of Contemporary Usage, and finds they all accept the use of apostrophes for pluralization. “Seems to me that if the Language Police want to publicly accuse someone (even an anonymous someone), they should be quite sure that their targets are in fact guilty,” he notes.

Point well taken — though personally, apostrophic pluralizations still just kinda look wrong to me.)

Interesting meditation by Randy Cohen, “The Ethicist” in the New York Times Magazine. Someone wrote in to ask if it’s ethical to Google someone they’re dating. His response includes this riff:

The Internet is transforming the idea of privacy. The formerly clear distinction between public and private information is no longer either/or but more or less. While the price of a neighbor’s condo may be a matter of public record, it’s a very different kind of public if it’s posted on the Internet than if it’s stored in a dusty filing room open only during business hours. This distinction does not concern the information itself but the ease of retrieving it. (And new technology brings this corollary benefit: you can now be consumed with real-estate envy in the privacy of your own shabby home.) With this change comes a paradoxical ethical shift where laziness, or limiting yourself to insouciant Googling, is more honorable than perseverance, as in hauling yourself down to the municipal archives, say.

It’s an obvious point, but a good one. And it makes me think about John Poindexter’s totally berserk Total Information Awareness Project. Any sane person is (rightly) worried about Poindexter’s desire to capture and preserve tons of commercial data about your everyday life — credit-card, travel, medical and school records, among others.

But in a way, Google’s already doing this, in a much more benign way. It frequently pulls together amazing bits of data, connecting the dots of your life — or your date’s life. Which is partly why people have been able to use the Web, and Google, to give Poindexter a taste of his own medicine. According to this great story at Wired News:

Online pranksters, taking their lead from a San Francisco journalist, are publishing John Poindexter’s home phone number, photos of his house and other personal information to protest the TIA program.

Matt Smith, a columnist for SF Weekly, printed the material — which he says is all publicly available — in a recent column: “Optimistically, I dialed John and Linda Poindexter’s number — (301) 424-6613 — at their home at 10 Barrington Fare in Rockville, Md., hoping the good admiral and excused criminal might be able to offer some insight,” Smith wrote.

“Why, for example, is their $269,700 Rockville, Md., house covered with artificial siding, according to Maryland tax records? Shouldn’t a Reagan conspirator be able to afford repainting every seven years? Is the Donald Douglas Poindexter listed in Maryland sex-offender records any relation to the good admiral? What do Tom Maxwell, at 8 Barrington Fare, and James Galvin, at 12 Barrington Fare, think of their spooky neighbor?”

His peers hated him; he didn’t think much of them either. And now that Steven Soderbergh has made a version of Stanislaw Lem’s sci-fi novel Solaris, the 81-year-old Polish writer kinda hates it. Jeet Heer of the National Post has written a terrific piece summarizing the life and complex, frequently misunderstood work of this sci-fi titan. A sample:

Among other things, “Solaris” is a veiled attack on Marxism and its claim to have replaced religious mystery with a science of human history. Solaristics, the systematic study of the planet’s ocean, is said to be a rational pursuit - but it’s really, Kelvin notes, just “the space era’s equivalent of religion: faith disguised as science.” He adds: “Contact, the stated aim of Solaristics, is no less vague and obscure than the communion of the saints, or the second coming of the Messiah.”

Check the piece out; if more critics wrote this intelligently about science fiction, more people would read it!

Though maybe the tide’s turning. There’s been a recent boomlet of articles praising Philip K. Dick and noting how eerily prescient was his superparanoid vision of the world. Laura Miller wrote a terrific essay for the New York Times Book Review a while back — “It’s Philip Dick’s World, We Only Live in It” — and back in the summer, an op-ed piece in the Times noted that Dick was the perfect author for our highly-surveilled times.

Apparently, Google News led with a press release today. As CBS Marketwatch notes:

On Tuesday, a news release from Schaeffer’s Investment Research, highlighting Best Buy and Circuit City, was the top “story” on Google’s business-news page. Press releases often include significant information, no doubt. But most living, breathing editors would be chagrined to see that type of snafu on their pages. At that moment in time, on Dec. 17, the Schaeffer’s release topped the story about New York prosecutors securing their first guilty plea in the case against Tyco.

But, really, what are we to expect? Google assigned that typically hallowed job of story placement to a software program — a secret sauce of algorithms.

I’ve been interested watching the cackling glee of reporters as they catch Google News in its many small errors. Because of course, two things are totally obvious here: 1) Newsbots like Google News will never totally supplant traditional newsgathering. Nonetheless, 2) reporters have done a simply enormous amount of handwringing over this possibility.

Why? I think reporters’ dread is a submerged, nigh-Freudian fear. Google News may mess up every once in a while, but most of the time it’s sufficiently good that it showcases just how lame most real newspapers are. The newsgathering skills of most reporters — and their inverted-pyramid style — are so deeply programmatic and devoid of creativity that they’re as close to robotic as you can get.

Sure, reporters may be getting freaked out by automatons. But then again, it takes one to know one.

There’s an absolutely masterful piece at The New Republic by senior editor Jonathan Chait, about the new conservative campaign to demonize those so poor that they don’t pay taxes. It leads by describing a recent Wall Street Journal article:

To wit, a recent lead editorial titled “THE NON-TAXPAYING CLASS.” A reader unfamiliar with the Journal’s editorial positions might read this headline and assume it refers to ultra-wealthy tax dodgers. But no — the Journal, of course, approves of such behavior. The non-taxpayers it denounces are those who earn too little to pay income taxes: “[A]lmost 13 percent of all workers,” the editorial fumes, “have no tax liability. … Who are these lucky duckies?” In typical Journal fashion, the editorial is premised upon a giant factual inaccuracy — it completely ignores sales and excise taxes, which consume a huge share of the working poor’s income. But what makes the editorial truly exceptional is the reasoning underlying it. The Journal complains that low taxes on the poor are “undermining the political consensus for cutting taxes at all.” For instance, the editorial considers the example of a worker who earns $12,000 per year, and, after noting bitterly that he pays less than 4 percent in income taxes, concludes, “It ain’t peanuts, but not enough to get his or her blood boiling with tax rage.” In other words, the Journal wants to raise taxes on the working poor so that they will have more “tax rage” and thus vote for Republicans. Once in office, of course, those Republicans would proceed to cut taxes for the well-off. (Indeed, according to the Journal’s logic, they couldn’t cut taxes on the poor because that would just lead them to stop voting Republican.)

The piece gets even better and funnier after that. Go read it; you have to register (for free), but I swear to god it’s worth the hassle merely for this one superb piece.

Check out Gawker — a very cool new blog, devoted to chatty, catty gossip about Manhattan! I love this stuff … more proof of the interesting small publishing-biz possibilities that flow out of blogging. They find tons of excellent tidbits of New York life, such as this bit from the New York Observer:

Drunken Shopping

The gift shop at the Times Square W has developed an ingenious new retail strategy: ensure that your shoppers are drunk when they enter the store by putting it next to the bar. Then allow—even encourage—your customers to continue downing cocktails once inside, as the inhibition leads to promiscuous AmEx Centurion Card use. Most of the customers are male and tend to buy things for women—the ones they’re dating, the ones they’re sleeping with, the cocktail waitresses, the sales associates. Anyone, really. But women do it, too. “We have a Fabien Baron snowboard,” says sales associate Caitlin Sheehan, “and a drunk woman came stumbling out of the bathroom one night and asked if it’s a belt—and it’s so totally not.”

(This item courtesy Boing Boing!)

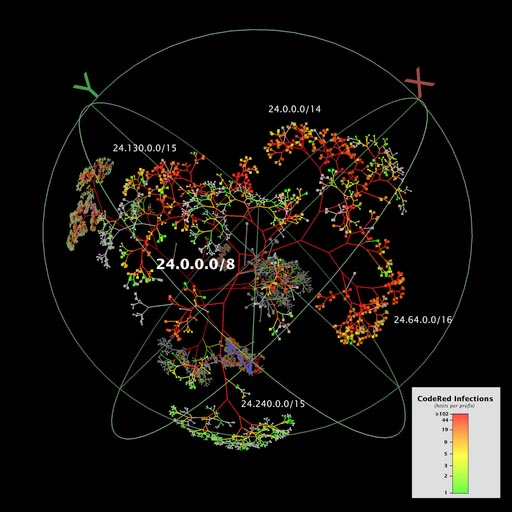

Wow. Dig this totally beautiful graphic! It’s a graphic representation of how the Code Red computer virus spread throughout the Internet. Some geeks at the University of California pulled it together; it’s printed in the White House’s otherwise almost unreadably-dull “National Strategy To Secure Cyberspace” comment paper.

When I showed it to a friend on a discussion board, he asked: “Are you sure it’s not a lung getting emphysema?” Which is, of course, precisely the point: It’s the Internet getting emphysema.

(Side note: this graphic is so huge it is temporarily stretching the column size of my blog horizontally, but I’m kind of digging the look.)

Ever been browsing for books at Amazon and notice the “recommendations” area? I’m talking about that section on each page where it says “Customers who shopped for this item also shopped for these items.”

It’s a bit of artificial intelligence. Amazon keeps track of what books each customer is browsing, and uses “collaborative filtering” to automatically detect patterns. So if a bunch of people who browse The Nanny Diaries are also browsing, say, I Don’t Know How She Does It, then presto — Amazon’s artificial-intelligence agent will make the link between the two titles, and let you know.

But here’s where it gets fun. Recently, an Internet-security expert was looking at a Pat Robertson book when he looked down to see “Customers who shopped for this item also shopped for” … The Ultimate Guide to Anal Sex, by Bill Brent. I’m not kidding: the screenshot is here.

Pranksters, it seems, had hacked the system. It’s not hard to do. All you do is go to the page for the Pat Robertson book, then click over to the new title you want to link it to. Do this a couple dozen times yourself, and get a few dozen friends to do the same. Soon, the filtering mechanism will sense the pattern, and bingo!
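To make the trick concrete, here is a minimal sketch of the simplest filter that would behave this way: plain co-occurrence counting over browsing sessions. Amazon has not published how its recommendations actually work, so this is an assumption for illustration only, and the function names and book titles are hypothetical.

```python
from collections import Counter, defaultdict
from itertools import combinations


def build_links(sessions):
    """Count how often each pair of titles appears in the same browsing session."""
    links = defaultdict(Counter)
    for session in sessions:
        # A title viewed twice in one session still counts once for that session.
        for a, b in combinations(sorted(set(session)), 2):
            links[a][b] += 1
            links[b][a] += 1
    return links


def also_shopped(links, title, top_n=1):
    """Return the titles most often browsed alongside `title`."""
    return [other for other, _ in links[title].most_common(top_n)]


# Hypothetical data: 30 ordinary sessions, then 40 coordinated prank sessions.
organic = [["Robertson book", "Bible commentary"]] * 30
prank = [["Robertson book", "Prank title"]] * 40

before = also_shopped(build_links(organic), "Robertson book")
after = also_shopped(build_links(organic + prank), "Robertson book")
```

In this toy version, `before` is the commentary and `after` is the prank title. The counter has no idea who is clicking or why, so a few dozen people repeating the same two-page visit can outvote everyone else.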

It’s a great lesson about machine intelligence. Corporations continually surround us with A.I. tools, and sort of hope we’ll just be in awe of them — and not take the time to find out what’s under the hood. Eventually, of course, we do, and we discover the paradox of A.I.: That the strongest-seeming artificial intelligence is usually based on very simple techniques. Faced with the spectacle of Christian anal sex, Amazon had to go in and manually break the link:

“It seemed to us that this is a rather curious juxtaposition of the two titles,” said Amazon spokeswoman Patty Smith.

The Robertson book now links to “clean underwear” and “ladybug rain boots,” which actually to my mind is far more alarming, but whatever.

Okay, that’s an inflammatory statement. But consider the recent ImClone scandal. The company was working on a hot new cancer drug, their stock flew up to $80, and all the shareholders were in heaven. Then the FDA alerted ImClone executives that their drug — Erbitux — would not be approved for 2002. Breaking all insider-trading laws, ImClone CEO Sam Waksal alerted his family, dumped his own stock, and even contacted celebrity friend Martha Stewart, who sold $230,000 before the news got out and the stock tanked … leaving everyday investors holding the bag.

These sordid, venal details are all well known. And many reporters and pundits have thus concluded that Waksal and Stewart and all the other greedy executives were up to no good, and that ImClone got what it deserved.

Unfortunately, there’s a whole other bunch of people that got screwed: Cancer patients. In a brilliant story for United Press International, Mary Culpeper Long notes that cancer scientists and advocates worldwide think Erbitux is not a scam. It’s the real deal, a genuinely good piece of science and engineering that might save lives:

According to Dr. Harmon Eyre of the American Cancer Society, “there’s no question about the fact they’ve opened a new era.”

But Erbitux’s success relies on ImClone’s success. And ImClone’s success relies on its executives operating in honest, good-faith, above-board ways. When Waksal screwed around with his insider trading, he risked messing up ImClone so badly that Erbitux might never make it to market. So you can see where this is going …

The success of Erbitux and other ground-breaking new medical treatments seems to be inextricably tied to the behavior of corporate executives and the health of their companies. As a result, the health of Americans, in this case those suffering from cancer, is not only in the hands of scientists — but of corporate executives as well.

Precisely. When Sam Waksal speed-dials Martha Stewart so they can save a couple hundred thousand bucks and afford a more stupidly lavish winter vacation, they’re not just being greedy. They’re also potentially killing people with their self-serving actions.

Of course, we could be more sympathetic to Waksal. ImClone is a free-market enterprise, after all; Waksal was running a company, not a charity, and there’s nothing in his charter that says he has to serve mankind. Quite the contrary. A public company has only one Asimovian prime directive: By law, it must “maximize shareholder value” or get hauled into court. So to be fair to Waksal, he’s only doing what businesspeople always do — playing a risk-taking game in hopes of making more dough. Fair enough.

But one could also ask: Does this arrangement actually serve society? Should we really be developing life-saving drugs using the same marketplace that develops Nike Shox, the Terminator movies, and Cheetos? The free market can be a quite nifty thing, but it frequently forces CEOs to skate on ice so thin it risks destroying their corporations. When lives hang in the balance, is this such a hot idea?

(Note: Zach of Zoombafloom wrote an excellent critique of this posting; check it, and my windy response, out here.)

A few days ago I wrote about Danny O’Brien’s algorithm for washing dishes — illustrating the weird complexity of everyday tasks.

Andrew Wu posted a comment pointing to this totally excellent site by Don Norman — where Norman studied the algorithm of how we use toilet paper!

Norman had the usual problem we all faced: We never replace the toilet paper until it’s dwindled to the end of the roll. And if that happens to be your final roll — you’re out of luck. So he tried to solve this problem by installing two toilet-paper holders side by side, the same way public bathrooms have them. Interestingly, it didn’t work:

We discovered that although we now had two rolls instead of one, the problem was not solved. Both rolls ran out at the same time. Sure, it took twice as long before the rolls emptied, but we were still stuck with the same problem: no more paper. We had discovered that the switch to two rolls meant we had to use more sophisticated behavior: the algorithm for tearing of paper mattered.

After some self-observation and discussion, we discovered that three different algorithms were in use: large, small, and random.

Algorithm Large: Always take paper from the largest roll.

Algorithm Small: Always take paper from the smallest roll.

Algorithm Random: Don’t think — select the roll randomly.

We had assumed that Algorithm Random was most natural. After all, we had bought the dual-roll holder specifically so that we wouldn’t have to think. But were our selections truly random, we would chose each roll roughly equally, so they would both empty at the same time — or close. Algorithm random is not the one to use. To use toilet paper requires thought.

Our self-observations revealed that we really didn’t use the random algorithm — people are seldom random. The most natural: that is, we soon discovered, was to reach for the larger roll. Alas, consider the impact. Suppose we start with two rolls, A and B, where A is larger than B. With algorithm large, paper is taken from A, the larger of the two rolls until its size becomes noticeably smaller than the other roll, B. Then, paper is taken from B until it gets smaller than A, at which point A is preferred. In other words, the two rolls diminish at roughly the same rate, which means that when A runs out of paper, B will follow soon thereafter, stranding the user with two empty rolls.

Algorithm small turns out to be the proper choice. With algorithm small, paper is always taken from A, so it gets smaller and smaller until it runs out. Then paper is taken from roll B, which is full size at the time of the switch.

Yikes. We never realized that you had to be a computer scientist to use toilet paper.
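If you want to watch Norman’s three algorithms play out, here is a rough simulation sketch. The sheet counts, the one-sheet-per-tear rule and the function names are all my own assumptions, and it skips Norman’s detail that people only switch rolls once the size difference is noticeable.

```python
import random


def pick_roll(rolls, algorithm, rng):
    """Return the index of the roll to tear from under the given algorithm."""
    available = [i for i, sheets in enumerate(rolls) if sheets > 0]
    if algorithm == "large":
        return max(available, key=lambda i: rolls[i])
    if algorithm == "small":
        return min(available, key=lambda i: rolls[i])
    if algorithm == "random":
        return rng.choice(available)
    raise ValueError(f"unknown algorithm: {algorithm}")


def sheets_left_at_first_empty(algorithm, start=(200, 150), seed=0):
    """Tear one sheet at a time until a roll empties; return what the other roll holds."""
    rng = random.Random(seed)
    rolls = list(start)
    while min(rolls) > 0:
        rolls[pick_roll(rolls, algorithm, rng)] -= 1
    return max(rolls)


for name in ("large", "small", "random"):
    print(name, sheets_left_at_first_empty(name))
```

With these starting sizes, Algorithm Large leaves a single sheet on the surviving roll when the first one runs out, while Algorithm Small leaves the second roll untouched at 200 sheets. That is Norman’s point in two numbers.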

Here’s an interesting story about artificial-life fish.

(I can’t believe I just typed that last sentence. Did my mother sit me on her knee when I was a child and say, “one day, son, you’ll write about artificial-intelligence fish”? No, she did not. God in heaven, I was supposed to be a lawyer or something. Anyway.)

Ahem. The point is, there’s a cool story at The Feature about Dali, Inc. — a California firm that has developed a platform for mobile artificial-life constructs. According to the story, they’re ideal for use on mobile platforms, much like intelligent versions of Tamagotchis. Dali’s first version of this is a set of virtual aquariums (aquaria?) where you can run a portable, artificial fish that interacts online with others. I’m downloading it now to give it a whirl.

This reminds me of a rumor I once heard about the ill-fated Dreamcast game Seaman. As you may recall, Seaman was an artificial-intelligence “pet” that you talked to via a voice-recognition microphone system. It remembered things about you, grew up, and would engage you in increasingly complex conversations.

The rumor is this: Apparently, the makers of Seaman originally envisioned it as a networked PC game — where each player’s Seaman fish could go online and “talk” to the other fish, finding out what other fish were learning from their owners. Then your Seaman would come back, exponentially smarter from its contact with other Seamen, and freak you out by displaying its new knowledge. “Funny you should X,” your Seaman might tell you, “because a lot of other people are saying Y.” Yikes!

I have no idea if this story is true, but it sounds true. Heh.

The New York Times Magazine just came out with its now-annual “Big Ideas” issue — where they offer almost 100 pieces on the biggest new ideas that defined 2002.

I wrote a bunch of the science and technology ones. Here are the links — with a short description of each:

News That Glows: Our digital devices continually interrupt us, breaking our concentration by demanding our attention — with email, phone calls, and instant messages. “Ambient information,” in contrast, takes the reverse approach: It creates devices that display information in the background — as shifting colors and patterns that we register subconsciously.

Open-Source Begging: Karyn Bosnack rang up over $20,000 in debt, and couldn’t pay it back. So she set up a begging site and asked for donations … and over 2,000 people donated. Welcome to “open source begging” — a movement that applies the distributed zeal of Linux to the time-honored sport of holding out a tin cup on the sidewalk.

Outsider math: Scientists have spent 3,000 years searching for the way to prove whether a very large number is prime. Even the best and brightest number theorists couldn’t figure it out. But this year, a little-known scientist in India — who isn’t even known as a number theorist — cracked the problem, with the aid of two undergraduates. Why did the answer come from so far out in left field?

The Pedal-Powered Internet: Over in Laos, dirt-poor farmers don’t have phone lines, computers, or even electricity. Yet in a few months, they’ll be getting on the Internet. How? With a bunch of cobbled-together parts, a brilliant use of wifi, and a level of ingenuity that would have impressed Robinson Crusoe.

Smart Mobs: Scientists have known about the emergent behavior of hive-style insects for years. But now mobile devices are letting humans act in the same way — in “smart mobs,” groups that aren’t controlled by any single person, yet move like they have a mind of their own. (Read my piece, and go to Howard Rheingold’s site for even more Smart Mobbery goodness.)

Umbilicoplasty: The latest body part to go under the knife? The navel. Apparently, midriff-exposing clothes have become so prevalent that cosmetic surgeons are getting increasing requests from women who want to reshape this body part that has previously been hidden. According to one academic study, we’ve even developed a navel aesthetic — a cultural sense of what the “perfect navel” looks like.

Warchalking: Hobos used to leave symbols chalked on walls to let each other know where a free meal could be had. Earlier this year, British designer Matt Jones developed similar symbols for wifi — ways of showing where wireless Net connections are open for sharing.

Dig this: Some artists in Amsterdam have created the Realtime Project, where they track the movements of participants all day long, via GPS. They hand out tracking devices to anyone who wants to be part of the group — and then plot out their movements on maps.

The end result? These incredibly spooky traces of a human life, as it goes about its business in the city. There’s a cyclist, who ranges all over the place; a marathon runner, who goes from one end of the metropolis to the other; and a tram driver.

They’re oddly beautiful. They almost look like neural pathways through the brain — humans as the electrons flowing through the city-computer.

But these maps are also quite politically revealing — because this, dear reader, is your future in about five years. As we speak, mobile companies are working frantically to roll out technologies that will let them pinpoint the location of phone handsets down to a few meters. It’s called “location-based services,” and the goal is, in part, to offer you some fun toys — like the ability to pull out your phone and have it tell you where the nearest ATM or Italian restaurant is. But it’s also far more sinister, since the phone companies will be able to report your position to anyone who pays for the info, too.

So try this on for size: Imagine what it’s like when your boss knows where you are, all day long. Hell, you don’t even have to imagine it. Over in Hong Kong, the Pinpoint Company has released Workplace — a tool that tracks the location of workers’ mobile phones all day long. Employers will be looking at maps not much different from the Amsterdam project: Your pathways, for all to see.

There’s a cool story in the New York Times Science section about a reverse Turing Test. Instead of the classic Turing test — a contest where the computer tries to dissemble as a human — it’s a test that poses the opposite condition: The humans try to prove that they’re actually, well, human.

The story begins with Yahoo. A few years back, they were having problems with spambots. Spambots were logging onto free Yahoo mail accounts and sending out piles of crap — often hundreds of times a second. Yahoo seemed powerless to stop them; how do you prevent a robot from signing up for an account?

By imposing a test that separates humans from robots — definitively. After all, there are certain skills humans have that computers have never been able to emulate:

… in many simple tasks, a typical 5-year-old can outperform the most powerful computers.

Indeed, the abilities that require much of what is usually described as intelligence, like medical diagnosis or playing chess, have proved far easier for computers than seemingly simpler abilities: those requiring vision, hearing, language or motor control.

“Abilities like vision are the result of billions of years of evolution and difficult for us to understand by introspection, whereas abilities like multiplying two numbers are things we were explicitly taught and can readily express in a computer program,” said Dr. Jitendra Malik, a professor specializing in computer vision at the University of California at Berkeley.

Precisely. Computers can multiply million-digit numbers in an instant, but do an incredibly crappy job at recognizing shapes like bees or cars or words. Humans are precisely the opposite. So Manuel Blum at Carnegie Mellon University developed a test called the Completely Automated Public Turing Test to Tell Computers and Humans Apart, or CAPTCHA. A student of his, Luis von Ahn, wrote a program that takes a random word out of the dictionary, then stretches and skews it a bit. Computers can’t recognize the skewed shape, but humans can easily read the word. Yahoo now uses this test for all its free email: If you can figure out their test words, you’re a human and get an account. Otherwise, you’re a ‘bot, and they lock you out.
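For the curious, the “stretch and skew” step is just a bit of geometry. The sketch below is not von Ahn’s program, which I haven’t seen; it is a toy version with hypothetical names that picks a random word and applies a horizontal stretch and shear to outline points. A real test would also render the word as an image, which needs a graphics library and is left out here.

```python
import random


def make_challenge(words, rng):
    """Pick a random word plus random stretch and shear factors (assumed ranges)."""
    word = rng.choice(words)
    stretch = rng.uniform(0.8, 1.5)   # horizontal scaling
    shear = rng.uniform(-0.5, 0.5)    # how far the letters lean
    return word, stretch, shear


def distort(points, stretch, shear):
    """Map each (x, y) outline point to (stretch * x + shear * y, y)."""
    return [(stretch * x + shear * y, y) for x, y in points]


def check_answer(word, typed):
    """A visitor passes if they typed the word, ignoring case and stray spaces."""
    return typed.strip().lower() == word.lower()


rng = random.Random(42)
word, stretch, shear = make_challenge(["bees", "cars", "words"], rng)
unit_square = [(0, 0), (1, 0), (1, 1), (0, 1)]
skewed = distort(unit_square, stretch, shear)
```

The bottom edge of the square only gets stretched, while the top edge also slides sideways by the shear amount. That is what turns upright letters into leaning ones.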

It’s incredibly cool. But it’s also already being hacked — as I wrote in Wired magazine two months ago. It seems that spammers have figured out an end-run around the Yahoo system:

Here’s the weird thing: Some purveyors of porn developed a way to fight back. They rewrote the spambot code so that when the bots reach the visual recognition test, a human steps in to help out. The bots route the picture to a person who’s agreed to sit at a computer and identify these images. Often, insiders say, it’s a hormonal teen who’s doing it in exchange for free porn. The kid identifies the picture, the spambot takes the answer, and – bingo – it’s able to log in. “It’s the only way we know for getting around the picture test,” says Luis von Ahn.

And the kicker:

Now consider how deeply strange this is. Instead of a machine augmenting human ability, it’s a human augmenting machine ability. In a system like this, humans are valuable for the specific bit of processing power we provide: visual recognition. We are acting as a kind of coprocessor in much the same way a graphics chip works with a main Pentium processor – it’s a manservant lurking in the background, rendering the pretty pictures onscreen so the Pentium can attend to more pressing tasks.

… Techies from Nikola Tesla to Bill Gates are famous for cheerily prophesying the day when computers will do all the drudge work, leaving us humans to dedicate our magnificently supple brains to “creative” tasks.

Except, as our machines get smarter and faster, our much-vaunted creative powers may not be so valuable. What’s more useful, in man-machine systems, is our flexibility – our ability to deal with periodically messy, wrenching situations. We won’t be doing the brain work; we’ll be doing the scut work.

Earlier today, I was having breakfast with my girlfriend Emily in the West Village in Manhattan. I wanted to get a haircut, so I asked her if she knew of a cheap place nearby. We both racked our brains, but we couldn’t think of one; even though we walk around the neighborhood all the time, we’d never paid enough attention to the stores to have noticed a barber or discount hair salon.

But hey — I had my Danger Hiptop with me. So I whipped it out and Emily did a Yahoo Yellow Pages search for “haircut” in our area code. Cool, I thought; this is a great example of location-based mobile services — sorting data based on where you’re standing.

“Hey,” said Emily, peering at the tiny Danger screen. “There’s a Supercuts nearby! Oh, cool — it’s on Sixth Avenue, right north of 3rd Avenue. That’s right where we are! Wait a minute, that’s … oh …”

She trailed off and looked out the window. And there, right across the street, was the Supercuts we were reading about online.

Nice.

I’m as cyborg-positive as the next geek; I look forward to using wearable computers to help me navigate the world. But consider how totally pathetic this was. WE DIDN’T EVEN HAVE THE COMMON SENSE TO LOOK OUT THE DAMN WINDOW. No, my first instinct was — let’s check the Net. In a mobile world, we need data to help us recognize what’s sitting physically right under our noses.

Every once in a while I feel like I’m living in a New Yorker cartoon.

How do you shoot a film like Lord of the Rings — which regularly has scenes of 10,000-Orc armies clashing with an equally massive sprawl of elves, humans and dwarves? Well, you could try to create computer-generated Orcs one by one, and figure out ways to animate them. But as it turns out, that makes the armies seem curiously stiff and scripted.

The solution? Use A.I. A New Zealand programmer for the movie created Massive, a program that gives each Orc a bit of artificial intelligence — and turns them loose. Each individual Orc tries to kill opponents and stay away from bad situations, much like the A.I. opponents in games like Quake or Half-Life. The result is a scene that has the same level of realistic chaos you’d get if you had 10,000 actual human extras acting it out:

Like real people, agents’ body types, clothing and the weather influence their capabilities. Agents aren’t robots, though. Each makes subtle responses to its surroundings with fuzzy logic rather than yes-no, on-off decisions. And every agent has thousands of brain nodes, such as their combat node, which has rules for their level of aggression.

When an animator places agents into a simulation, they’re released to do what they will. It’s not crowd control but anarchy. That’s because each agent makes decisions from its point of view. Still, when properly genetically engineered, the right character will always win the fight.

“It’s possible to rig fights, but it hasn’t been done,” [Stephen] Regelous [creator of Massive] said. “In the first test fight we had 1,000 silver guys and 1,000 golden guys. We set off the simulation, and in the distance you could see several guys running for the hills.”
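To get a feel for what "fuzzy logic rather than yes-no, on-off decisions" might mean in practice, here is a minimal Python sketch of a single toy "combat node." To be clear, this is not Massive's code: the function names, the 50-metre range, the two rules, and the 0.2 threshold are all hypothetical, picked only to show how graded inputs can produce graded urges.

```python
def clamp01(x: float) -> float:
    """Keep a fuzzy membership value inside [0, 1]."""
    return max(0.0, min(1.0, x))


def combat_node(enemy_distance: float, own_health: float) -> dict:
    """Toy 'combat node': returns graded urges, not a yes/no decision.

    Hypothetical inputs: enemy_distance in metres (0..50), own_health in 0..1.
    """
    # Fuzzy memberships: degrees of truth between 0 and 1.
    enemy_near = clamp01(1.0 - enemy_distance / 50.0)
    healthy = clamp01(own_health)
    hurt = 1.0 - healthy

    # Fuzzy AND is taken as min(), a common convention.
    attack = min(enemy_near, healthy)  # near AND healthy: press the attack
    flee = min(enemy_near, hurt)       # near AND hurt: run for the hills
    return {"attack": attack, "flee": flee}


def choose_action(urges: dict) -> str:
    """Act on the strongest urge; stay idle if nothing is pressing."""
    action, strength = max(urges.items(), key=lambda kv: kv[1])
    return action if strength > 0.2 else "idle"


if __name__ == "__main__":
    print(choose_action(combat_node(enemy_distance=5, own_health=0.9)))
    print(choose_action(combat_node(enemy_distance=5, own_health=0.1)))
```

Give a thousand agents slightly different health and positions and even two rules like these will produce a few deserters, which is roughly the behavior Regelous describes.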

It’s a neat exemplar of what I call the new “military/entertainment complex.” It used to be that the military innovated — and perfected — the technology that was later used by entertainment (the way, for example, ballistics algorithms and computer circuitry developed for warfare were later used for video games). That’s frequently reversed now. As Wired noted several issues ago, the best A.I. work now is in video games and movies; they innovate, and the government follows. It’s a side-effect of cheapening computer power and the infinite-monkeys rule: If you have a million geeks working on kewl stuff for fun, they’re quite often going to develop toys faster and better than even NASA.

Have you heard of the “machinima” movement? Basically, it’s a bunch of film artists who realized two years ago that video-game 3D engines could be used to create animated films. After all, when you play a 3D game, the engine creates the scene on the fly. It takes some bits of data — i.e. what the environment looks like, what the characters look like, where they’re located and what they’re doing — and renders it as a moving picture. Most of the time, of course, the “action” is composed of gamers blowing the hell outta each other and spraying raw guts around the screen.

But it doesn’t have to be. The engine could just as easily be used to script a short animated film. Thus was born “machinima” filmmaking — an artistic movement that has spawned a web site with hundreds of films. They range from the original pioneering “lumberjack” comedies (the main characters were lumberjacks because the early Quake game-engine rendered an axe as each character’s base weapon; you couldn’t put it down!), to some really sophisticated Matrix rip-offs.

But here’s where it gets really weird. A while back, a British production house called Strange Company did a machinima version of … Percy Bysshe Shelley’s poem “Ozymandias.” I’m not kidding. It’s an animated representation of the, er, action of the poem, such as it is. (You can download the film here.) Sure, it may be kind of stilted — the “actor” in the film seems oddly modern, and thus out of place for the poem’s vintage, and the desert. But the atmosphere neatly captures the eerie desolation of Shelley’s original work. This is easily one of the most innovative things I’ve ever seen done with poetry. And hey: Imagine if someone did this with the first book of Paradise Lost; with all the satanic fires and flying demons, it’s a natural for video-game rendering! Or how about Emily Dickinson’s nutsoid hallucinations? Or e.e. cummings? The mind boggles. Thankfully, Strange Company’s “Ozymandias” has been picked up already and praised by media ranging from the New York Times to Roger Ebert; I’m coming late to the party here.

I think I’m going to email this film to Joe Lieberman — as well as every other politician or pundit who constantly brays about how video games are turning people into violent, mindless drones.

(Oh, interesting update: here’s a “Director’s Diary” of the Ozymandias project, written by director Hugh Hancock. He has some very cool meditations on the aesthetic style of machinima, and how it’s not just a “poor cousin” of normal CGI animation.)

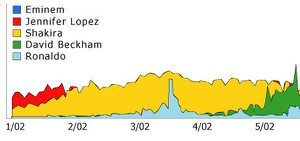

Google’s now-annual ranking of the Zeitgeist is out! For those of you who haven’t seen this yet, it’s Google’s redaction of the year’s most popular search terms. As usual, I find the whole thing fascinating: Which men, women, news events, games, TV shows, and memes dominated the English-speaking, Net-using world? Search engines have become the evolving Rorschach blots of the age; hell, I wish I could view a real-time scrollbar of Google’s top searches on a daily basis.

Here’s the trend I find most interesting in this year’s Zeitgeist: Trillian hit number 13 on the “Top 20 Gaining Queries” — queries that grew the most this year. Trillian is, of course, the instant-messaging killer app: It lets you connect to ICQ, AOL IM, MS Messenger and Yahoo Messenger (as well as IRC, for all you l33t d00dz) all at once. It’s a great indicator of how messaging is becoming the de facto way people keep in contact — by pinging each other all day long, instead of composing back-and-forth emails.

It probably also foregrounds how important the “presence” aspect of instant messaging is becoming: The ability to know which of your posse is online, at any point in time. I use my Danger Hiptop a lot for that reason. When I’m on the road and want to know where everyone is, I zip for a second onto AOL IM and see who’s online; it gives me this nigh-ESP-like sense of my location in the datasphere.

Interestingly, “SMS” was the second-most popular “technology” search (after “MP3”, of course). Another excellent example of how messaging — via mobile devices — is finally hitting the mainstream in the U.S., after winning over the entire European continent. If everybody out there hasn’t already read Howard Rheingold’s Smart Mobs, run — do not walk — to get a copy and read it now. It’s breathtakingly prescient about where communications, and our social behavior, are going.

What’s in a name? Quite a bit, in the eyes of corporate America.

According to a story in today’s New York Times, two academics recently completed a fascinating experiment to test racism in the workplace. They sent out resumes to 1,300 help-wanted listings in Boston. The resumes were basically identical, except for the names: Half the resumes had names popular amongst white folks (like “Kristen” and “Brad”) and half the resumes had names popular amongst blacks (like “Tamika” and “Tyrone”).

The result?

Applicants with white-sounding names were 50 percent more likely to be called for interviews than were those with black-sounding names. Interviews were requested for 10.1 percent of applicants with white-sounding names and only 6.7 percent of those with black-sounding names.

Interestingly, the black-sounding names that received the worst call-back rate were “Aisha” (2.2 per cent), “Keisha” (3.8 per cent) and “Tamika” (5.4 per cent), compared to 9.1 per cent for “Kenya” and “Latonya”.
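For anyone wondering how a gap between 10.1 percent and 6.7 percent turns into "50 percent more likely," the arithmetic is short. The sketch below uses only the two callback rates quoted above; the function names are mine, not the researchers'.

```python
def percentage_point_gap(rate_a: float, rate_b: float) -> float:
    """Absolute difference between two rates, in percentage points."""
    return rate_a - rate_b


def relative_gap_percent(rate_a: float, rate_b: float) -> float:
    """How much larger rate_a is than rate_b, as a percentage of rate_b."""
    return (rate_a - rate_b) / rate_b * 100.0


if __name__ == "__main__":
    white_rate = 10.1  # percent of resumes called back (from the article)
    black_rate = 6.7   # percent of resumes called back (from the article)
    print(round(percentage_point_gap(white_rate, black_rate), 1))  # about 3.4 points
    print(round(relative_gap_percent(white_rate, black_rate), 1))  # about 50.7 percent
```

So the absolute gap is only about 3.4 percentage points, but measured against the lower rate it is a difference of roughly half.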

More disturbing still:

Their most alarming finding is that the likelihood of being called for an interview rises sharply with an applicant’s credentials — like experience and honors — for those with white-sounding names, but much less for those with black-sounding names. A grave concern is that this phenomenon may be damping the incentives for blacks to acquire job skills, producing a self-fulfilling prophecy that perpetuates prejudice and misallocates resources.

Cory Doctorow of Boing Boing is blogging some talks at the Supernova conference. He’s got a great redaction of Dan Gillmor’s discussion of the impact of various new technologies — P2P, wireless, blogging — on journalism:

First, “Old Media.” Then “New Media.” Now, “We Media” — the power of everyone and everything at the edge.

Sept 11 was the turning-point. Dan was in South Africa and got the same coverage the rest of the world did. Most of us couldn’t get to nytimes.com, but blogs filled in the gap. The next day we had the traditional 32-point screaming headlines and photos. But we also got, through Farber’s Interesting People list, links to satellite photos of the event, first person accounts from Australians explaining how it felt outside of America.

Blogs covered it, and then a personal email from an Afghan American that circulated on the Internet, got posted to blogs, made it onto national news …

Journalism goes from being a lecture to a seminar: we tell you what we have learned, you tell us if you think we’re correct, and then we discuss it: we can fact-check your ass (Ken Layne).

Dan’s new foundation principle: “My readers know more than I do.”

This is true for all working journalists, and not a threat, it’s an opportunity …

The new tools of new journalism: Digital cameras, SMS, writeable web (blogs, wikis, etc), recorded audio and video.

Blogs are the coolest part of it: variety, gifted pros and amateurs, RSS, meme formation and coalescing ideas, real-time (heh — typing as fast as I can).

15-year-olds blog from cellphones today — they’re who I ask for tips on the future. Joi Ito blogs with his camera — so do smart-mobs. (David Sifry: A guy ran a marathon and blogged it from his Sidekick).

The next time there is a major event in Tokyo, there will be 500 images on the web of whatever it was that happened before any professional camera crew arrives on the scene.

That last point is so insanely true. Back when I was in Brooklyn watching the World Trade Center towers collapse, I looked around the street. And everyone — every single person — had their mobile phone out and was talking on it, describing the horrific scene. And I realized later on: That was peer to peer newsgathering in action. The first news many people got of the WTC disaster came not from CNN, and not even from a web site, but from a friend calling them. Wireless data devices will massively amplify this trend in the next few years.

Heh. In a Wired News story today, there’s a piece about the culture of kids and mobile phones. Dig this incident between a mother and her daughter:

Dr. Cathryn Tobin of Toronto, Canada, said her three children — ages 10, 12 and 14 — all have cell phones because “it gives me a great deal of peace of mind to be able to reach them.”

She added that her youngest daughter, Madison, happens to be the most responsible with the phone. Madison always takes it with her and is constantly recharging it.

She is also quite savvy with it: One day Madison had a tiff with her mother. As she sulked in the back seat of the car, she punched a message on her phone. Some seconds later, her mom’s phone — in the front seat of the car — beeped and Tobin received this text message: “I’m sorry, mom.”

It makes me think of some of the covert ways I myself have used texting to communicate. Once, in a bar, I was sitting next to a friend, and we were talking to a couple we know. At one point, I wanted to say something privately to my friend — but figured whispering in his ear would look a little rude. So I just wrote a short message to him (using T9, I can touch-type on my phone without looking at it, holding it under the table) and sent it to him. His phone buzzed, he looked at the screen, and got the message quietly and privately.

But also, this piece makes me think … jesus, is it really such a great idea to hand radiation-emitting devices to young kids with growing brains? Granted, there’s no scientific proof that they cause any genetic damage or whatnot. But that’s partly because the phone companies frantically quash anything that comes up, and actively campaign to discredit any studies that unearth troubling findings. Earlier this year, I profiled Louis Slesin, the editor of The Microwave News, for Shift magazine — the article is here. Give it a read; Slesin is a smart guy with a scientific pedigree who’s been following the wireless industry for years … and he’s scared shitless.

I always wonder why libertarian or right-wing economists aren’t bigger fans of mainstream hip-hop.

I’ve just been watching J.Lo’s “Jenny From The Block,” and like much mainstream bling-bling hip-hop, it’s a libertarian wet dream. Conservatives have been trying to hone a message like that ever since the freakish excesses of wealth in the turn-of-the-century Gilded Age. During the First World War, when megarich dudes in New York were crushing pearls into their $200 glasses of wine to drink, wealth got a bad name. So after the stock market crash, you had a massive reaction against it — with taxes on the wealthy rising, unionization sweeping the country, and a general civic attempt to rein in the rampaging power of the top-one-per-centers. Ever since this WWII turnaround, the rich have been frantically beating back the bad press.

Their main message? We’re Just Average Folks. Sure, they might have billions in their bank accounts; they might destroy the lives of thousands of laid-off employees at the stroke of a pen; they might spend $2,200 on gold-plated wastepaper baskets; but other than that, they’re just like you and me! Hence the constant, desperate attempts of intergalactically powerful CEOs in PR appearances to be seen driving a cheaper car, sitting around in their shirtsleeves, maybe drinking a can of Bud.

Still, this stuff has always seemed like what it is: Pretty phony. So why don’t they imitate J.Lo, and much of mainstream wealth-obsessed hip-hop? Just take the wealth and power, grind it in everyone’s face, and bitch them out for being jealous if they complain.

Consider the whole concept of “playa hater”. Conservative economists have never been able to come up with a phrase more elegantly and sneeringly smug. Nor more politically powerful; there is simply no way to respond to a pop-culture charge of being a playa hater, an envious despiser of those who’ve worked hard for their massive wealth. And as J.Lo notes, she “used to have a little, now I got a lot” — as if the manna dropped from heaven as a reward for her clear superiority to all around her. We’re all from the same ‘hood; we all have the same roots; it’s just that you suck and I don’t. Merit: Another cherished bead in the libertarian catechism!

Of course, genuinely streetwise hip-hop is all about the other side of the economic equation: The brutal lot of the bottom-20-per-centers in society; the berserk and subtle racisms of everyday life; the fact that the rich keep most everyone else out of their clique by keeping high the price of admission to, say, the 92nd Street Y. But J.Lo’s style of glossy, breezy mainstream hip-hop pretty much out-Rands Ayn Rand. Hell, I could imagine Tom Delay striding across a campaign stage to the bouncy, saccharine venality of “Jenny From The Block.” Let’s wait two years; maybe he will.

(Coolness alert: In the comments section, there are several extremely smart comments about this subject by Maura and Morgan.)

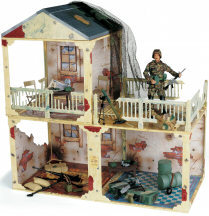

$44.99

Take command of your soldiers from this fully outfitted battle zone. 75-piece set includes one 11½”H figurine in military combat gear, toy weapons, American flag, chairs and more. Assembled dimensions: 32x16x32”H. Plastic. 10 lbs. Ages 5 and up.

(Thanks, Maura, for pointing this one out!)

A couple of days ago I wrote about the FurReal cat, and how much it creeps me out. Essentially, I argued that robot-looking robots are cool — like R2D2 — but ones that attempt to genuinely mimic life-forms (like that fur-covered, purring animatronic cat) just give me the heebie-jeebies.

As it turns out, I’m not alone. In the Collision Detection message boards, Alan Daniels posted a link to a superb essay that analyzes my creeped-out reaction. The essay, “The Uncanny Valley”, is by Dave Bryant and discusses the theories of Japanese roboticist Masahiro Mori. Mori analyzed human reactions to robots and monsters in sci-fi flicks, and developed a theory:

Stated simply, the idea is that if one were to plot emotional response against similarity to human appearance and movement, the curve is not a sure, steady upward trend. Instead, there is a peak shortly before one reaches a completely human “look” … but then a deep chasm plunges below neutrality into a strongly negative response before rebounding to a second peak where resemblance to humanity is complete.

This chasm — the uncanny valley of Doctor Mori’s thesis — represents the point at which a person observing the creature or object in question sees something that is nearly human, but just enough off-kilter to seem eerie or disquieting. The first peak, moreover, is where that same individual would see something that is human enough to arouse some empathy, yet at the same time is clearly enough not human to avoid the sense of wrongness. The slope leading up to this first peak is a province of relative emotional detachment — affection, perhaps, but rarely more than that.

Go check out the paper and dig the graphs Mori charted — our affection for robots rising as they become slightly more lifelike, but then plunging when they look almost, but not quite, human. The “uncanny valley” is that steep, sudden plunge on the chart: The place where robots suddenly seem not charming and kooky, but eerie.

And as Alan noted on his own posting:

I think I understand why the robotic cat creeps you out. Frankly, it creeps me out too. But, if the robotic cat looked absolutely 100 percent like a real cat, down to the last purr and flick of the tail, I wouldn’t give a second thought to the fact that it’s a robot. Being a robot is fine, being a cat is fine, but when it tries to be something in the middle, it fails at both, and the overall effect is creepy and alien.

Today the Washington Post has a review I wrote of “The Support Economy” — a book by Harvard prof Shoshana Zuboff and her husband, former Volvo CEO James Maxmin.

In essence, they argue that modern consumerism — which they think is a good thing — has created the high level of individualism in modern American culture. The problem now, they argue, is that consumers crave and demand a level of individualized, personalized service that corporations are not prepared to deliver:

The problem, Zuboff and Maxmin say, is that mass-production and “managerial capitalism” — the engines of the 20th century’s economic growth — succeed by ignoring the individual consumer’s desire. Economists normally assume that Henry Ford’s achievement was to standardize the car, making it cheap to mass-produce. But this isn’t entirely true: Ford’s true brilliance was to standardize the customers. They agreed to buy a Model T in any color, so long as it was black.

Ford’s production-line innovations unleashed a postwar boom in consumption. But this itself led to an unforeseen conflict: As Americans began to consume more, the authors argue, the act of consumption helped define them as individuals. Indeed, Zuboff and Maxmin believe that consumption is now completely central to identity: “Through consumption of experience — travel, culture, college — people achieve and express individual self-determination. No one can escape the centrality of consumption.” Regular Americans, they suggest, now crave the personalized service once accorded only to the rich. The two forces collide: Customers want the personal touch, but companies offer one-size-fits-all …

It’s an intriguing analysis. Could “individuality” — the very thing touted in Xtreme-life ads for Gatorade and dentures alike — actually be making consumers more dissatisfied and cranky?

I go on to say a bunch of more critical things about the book — i.e. “I’m not sure whether getting brilliant service from British Airways and Dell is really such a top-drawer concern for wage slaves making $12,000 a year at Costco” — but if you want to read the whole thing, it’s here.

Audi is selling this two-seater car for wealthy children aged six to eleven. It costs $10,250. Interestingly, that’s almost the U.S. poverty threshold for two elderly people.

Features: Equipped with a fully functional lighting system including head, tail/brake lights and turn indicators. An all weather fiberglass body. Rack & Pinion Steering. Full suspension, disc brakes, emergency brake, working horn, simulated dashboard & two padded seats which are adjustable. (Custom colors also available.)

Technical Specifications: Honda 4 Stroke Engine, 83 cc. 2.2 h.p. Pull Start. Transmission - 2 forward / 1 Reverse. Max speed is 13 m.p.h. (Speed is governable.) Dimensions: Overall Length - 87”; Width - 40”; Height - 30”

Free Shipping Within The Continental USA.

One Year Limited Warranty

Age range depends upon child’s ability. Adult Supervision Required.

Always wear a DOT approved helmet.

Vehicle is NOT street legal.

Dig this online game: Michael Jackson drops a bunch of babies out of a hotel window, and you have to catch them!

It is, of course, totally hilarious. But there’s more going on here under the hood, I’d argue. This game is another one of the genre I wrote about for Slate a few months ago: “Games as a form of social comment.”

The game itself is not that addictive or even playable. At best, it’s a sort of intertextual swipe at the Kaboom-style games from days of yore. But playability or addictiveness is not the aim here; that’s secondary. The primary goal is to use the rhetoric of games to make a political or social comment — using game physics as a way to metaphorize a subject.

I mean, everyone and their cousin has written a piece decrying Jackson for endangering his child. But this is truly shooting fish in a barrel. Jackson is irretrievably bonkers, has been for years, and everyone knows it; there’s no point belaboring the fact. But …

[BEGIN POMPOUS LANGUAGE/JARGON WARNING] … by avoiding the rhetoric of text (or that of video, today’s other dominant medium) with which everyone is familiar, and using the newer rhetorical style of gaming — with its odd fusion of animation, physics, and interactivity — this kooky little Jackson game makes a bunch of funny little points all at once: About Jackson’s clear insanity; about the weird position of those horrified fans who watched him dangle the kid (my god, is he going to drop it?); about how we also have this sick, can’t-tear-my-eyes-away fascination with the whole spectacle (part of us wants him to drop the kid). These are all things you can explore implicitly in a game, in a fashion that feels qualitatively rather different from other media. People call this stuff “new media,” but they don’t realize how profound it is to have a genuinely new medium. [END POMPOUS LANGUAGE/JARGON WARNING]

I will now pull my head out of my ass. Go play the game; it’s fun listening to the babies shriek as they plummet to the ground.

Back in the 80s, the brother of a friend of mine had an idea. “My brother came up with a word he was going to sell to the tire industry for millions of dollars,” says my friend. The word? “Gription”.

Turns out, he was ahead of his time. “Gription” is here! A quick Google search found that “gription” is now used to describe the feel of a golf-club shape, a brand of kid’s football (pictured above), and a particularly hideous style of sandals.

Now I want to invent a word too. Any good ideas? Send ‘em in, and I’ll post them!

Danny O’Brien, the publisher of the way-kewl NTK newsletter, has been writing this hilarious algorithm of how to wash dishes:

Washing

The Current Item and the Queued Item are both washed. This involves completing a series of acts. The item has not been cleaned unless all of these acts have been completed. Some acts may be performed on a Queued Item, some acts may be performed on the Current Item, some may be performed on both. Acts vary according to the item. Acts should follow the order in which they are listed.

Cutlery

1. Dipped and shaken under basin water - Queued, Current

2. Areas of uncleanliness observed - Queued, Current

3. Unclean areas scrubbed clean - Current

4. Re-dipped - Current

5. Rinsed under cold tap - Current

Pots, Pans, Cups, Mugs

1. Dipped and shaken under basin water - Queued, Current

2. Areas of uncleanliness observed - Queued, Current

3. Handle (if any) of item scoured - Current

4. Outside bottom of item scoured - Current

5. Outside sides of item scoured - Current

6. Inside bottom and sides of item scoured - Current

7. Remaining unclean areas scrubbed clean - Current

8. Re-dipped - Current

9. Rinsed under cold tap - Current

Main Program

1. Clean basin.

2. Fill basin with hot water. When almost full, add detergent.

3. Now drop five to ten pieces of cutlery from the Pile into the basin (depending on size and dirtiness). This will form your initial Soaking Stack

4. Apply General Rules continuously until all items are on the Draining Board

I love this stuff. This is exactly the kind of thing I use when I explain to people why programming can be so hard, and why so many bugs exist. Because when you’re trying to describe how to do a task with total precision — which is what programmers have to do to computers — it’s almost impossible to think of everything. Eventually you forget to account for something, your program runs into something unexpected, and bang … it crashes. Bugs exist because the programmer assumes the computer, or the user, will “know” a piece of supposedly “commonsense” knowledge. But computers don’t know anything, and frequently, neither do users.
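To make that concrete, here is a rough Python sketch of just the cutlery part of O’Brien’s spec. The act names and the Queued/Current roles come from his list; the data layout, the function names, and the wok are my own hypothetical choices. Notice what happens the moment an item shows up that nobody wrote rules for.

```python
# Each act is paired with the roles it may be performed on, as in the spec.
ACTS = {
    "cutlery": [
        ("dip and shake under basin water", {"queued", "current"}),
        ("observe areas of uncleanliness", {"queued", "current"}),
        ("scrub unclean areas", {"current"}),
        ("re-dip", {"current"}),
        ("rinse under cold tap", {"current"}),
    ],
}


def acts_for(item_type: str, role: str) -> list:
    """Return, in order, the acts allowed for an item in the given role."""
    steps = ACTS[item_type]  # KeyError for any item type nobody planned for
    return [name for name, roles in steps if role in roles]


def is_clean(item_type: str, done: list) -> bool:
    """An item counts as clean only if every act was completed, in order."""
    return done == [name for name, _ in ACTS[item_type]]


if __name__ == "__main__":
    print(acts_for("cutlery", "queued"))   # only the first two acts apply
    try:
        acts_for("wok", "current")         # the spec never mentions woks
    except KeyError as missing:
        print("crash: no rules for", missing)
```

The cutlery case works fine. The wok is the bug: the "commonsense" knowledge that a wok is basically a pan lives in the programmer's head, not in the program.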

This is one reason that programmers sometimes have a weird contempt for users. Because to produce a bug-free program, they have to think about every single possible dumb-ass thing a user might do — including some very dumb things. Will the user try to hit a bunch of keys during a download? Will the user click the mouse on a few random buttons while the machine is trying to execute something? Essentially, the programmer trains himself to think of the user as a complete and total imbecile, who will screw everything up given half a chance. This isn’t because they’re mean. They have to think like this; it’s the only way to do their jobs well.

Sound cynical? Well, go back to that list of dish-washing commands. They all seem so blatantly, hilariously, almost annoyingly clear, right? As if they were written for an incredibly dumb child? Well, that’s how programmers have to think about the potent combination of a user and a computer: An incredibly volatile system that needs to be treated like a toddler.

Granted, not all programmers transfer this social formula to everyday life; they’re not always (or even very frequently) the inept nerds pop culture paints them to be.

But on the other hand, ever wondered how Bill Gates became such a strangely domineering, socially damaged freak? Wonder no more.

I first saw this cat in the current issue of Wired, and was immediately creeped out.

Technically, I ought to love the FurReal cat. It brings together two things I hold most dear — cats and robots! I love ‘em both. I have two cats and, frankly, not enough robots in my life.

But the thing is, I want my robots to look like robots. Consider this comment by the head of the toy design department of the Fashion Institute of Technology, in today’s Circuits section:

“You don’t want the technology in a toy to be visible,” said Judy Ellis, the chairwoman of the toy design department at the Fashion Institute of Technology. “The first robot pets were very cool-looking, but a child doesn’t relate to a shiny surface. A child can relate to a furry cat.”

Speak for yourself! I grew up waiting and waiting and waiting and waiting for computer technology to get cheap enough, and to rock hard enough, that I could finally have a personal robot. I watched all the craptastic B movies and I craved to own a shiny little tin-can like R2D2 that would follow me around. Or maybe something like Doctor Who’s K-9. That’s what a robot pet is supposed to look like, people!

But suddenly the future arrives … and it’s wrapped in fur. Ew. I mean, the whole point of a robot-looking robot is that its alien-ness is a technique almost like Russian formalism; it makes the world strange, the better to help us understand humanity. A shiny metal robot is a mirror in which we reflect upon our human-ness. (Plus, you can program it to bring you a beer and shit.)

So the idea of wanting robots to seem like natural living creatures? That’s straight out of Philip K. Dick’s Do Androids Dream of Electric Sheep?, a nasty little dystopia. In that book, some hideous war-related fallout — sufficiently grim that Dick never explains what it is — has killed off so many life-forms that only the insanely rich can afford to own actual live pets. Everyone else makes do with animatronic cats and dogs and hens and cows — and then tries desperately to pretend to their friends that the animals are real. It’s just about the most depressing book I’ve ever read in my life. And now Hasbro’s basing a marketing campaign on the idea.

I’m going to bed.

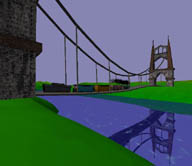

Dig this crazy game Pontifex! You build suspension bridges, try to get ‘em to stay up, and marvel at the wickedly-cool physics of cars going over them.

I’m still not terribly good at this, but I love it. There’s something really old-school about my taste in video games, I’ve decided. I like the ones that foreground the idea of “playing with physics.” Back in 1989, the American Museum of the Moving Image ran an exhibit on video games — part of which is online now — that talked about how early games were almost pure expressions of physics. The chips were so primitive, that’s really all they could do: Basic collision detection, rebound angles, and the like. So games like Asteroids or Pong had this very strong sense of playing inside the headspace of a computer chip. The joke that occurred to me, when I thought about this, is that “video games are what computers think about when we’re not around.”
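For the curious, "basic collision detection" and "rebound angles" really can be tiny. Here is a minimal Python sketch of a Pong-style ball bouncing around a box. It is my own illustration, not code from any actual arcade game, and the `Ball` class, the `step` function, and the box dimensions are all made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Ball:
    x: float
    y: float
    vx: float
    vy: float
    radius: float = 1.0


def step(ball: Ball, width: float, height: float, dt: float = 1.0) -> Ball:
    """Advance the ball one tick and bounce it off the walls of a box."""
    x = ball.x + ball.vx * dt
    y = ball.y + ball.vy * dt
    vx, vy = ball.vx, ball.vy

    # Collision detection: has the ball's edge crossed a side wall?
    if x - ball.radius < 0 or x + ball.radius > width:
        vx = -vx  # rebound: flip the horizontal velocity, mirroring the angle
        x = min(max(x, ball.radius), width - ball.radius)  # push back inside
    # Same check against the top and bottom walls.
    if y - ball.radius < 0 or y + ball.radius > height:
        vy = -vy
        y = min(max(y, ball.radius), height - ball.radius)
    return Ball(x, y, vx, vy, ball.radius)


if __name__ == "__main__":
    ball = Ball(x=98, y=50, vx=3, vy=0)
    print(step(ball, width=100, height=100))  # ball hits the right wall and turns around
```

That is more or less the whole game loop: move, check for overlap, flip a sign. Everything else is scorekeeping.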

This is what intrigues me so much about physics-style simulation games like this bridge game — and to a certain extent, space-based flying games. I like the idea of playing with physics, partly because it’s actually possible to render them with precision. Games like The Sims or SimCity slightly freak me out because the modelling is that of human life — and human life is so complex that inevitably, the sim relies on some rather sketchy and suspicious assumptions about people. The first edition of the Sims, for example (and am I on a Sims rant here or what?), explicitly notes that the two ways to make your Sim happy are a) to have lots of good human relationships, and b) to have lots of stuff.

Well, the former I agree with, but I can think of tons of examples where b) simply isn’t true. For personal or political or even spiritual reasons, some people are made miserable by having lots of stuff, and some people take great joy in not only not having stuff, but hacking or tweaking or even outright wrecking other people’s stuff. Like those creepy Sim suburbs I ranted about a few days ago, the political assumption that stuff = good is not really a “sim” of human behavior. It’s a fiction of consensus: an attempt to reduce human behavior to something simple enough for a game to deal with. And you know, geopolitics being the way it is right now, one would think it would be patently obvious to us that people frequently have massively different visions of what constitutes The Good Life. So why are our games so reductive in their view of society?

Sure, I realize this is insanely didactic and super-nerdy and weird of me. But this is why I prefer physics-style sims. You can lie about people — but not about Newton’s laws.

(A tip of the hat to El Rey for this one!)

Well, Nissan Motor Co. doesn’t — though it’s suing like hell to try and get it. And in doing so, it is proving a little-discussed fact: That dot-com brand names are becoming more useless every day.

Here’s the backstory. In 1991, a guy named Uzi Nissan founded a North Carolina company called Nissan Computer Corp. In 1994, he registered the domain name Nissan.com. In 1995, as you might imagine, a worried Nissan Motor lawyer wrote a letter expressing “concern” about Uzi Nissan’s use of the domain name — but didn’t demand the entrepreneur give it up.

Now dig this: The following spring, Uzi Nissan also registered Nissan.net to start an ISP. That’s right; even though Nissan Motor had expressed concern about someone else owning Nissan.com, the executives remained so totally clueless about the Net that — nearly one year later — they hadn’t gotten around to registering Nissan.net. And keep in mind, this was a full 18 months after Josh Quittner famously registered McDonalds.com and wrote about it for Wired. This whole domain-name thing was not a big secret or anything. How gormless can a major multinational firm get?

Of course, after a gazillion stories about the Net appeared in Fast Company and the Red Herring and other florid dot-com media, eventually even Nissan wised up to the importance of the Web. So in 1999, precisely at the peak of dot-com hysteria, it met with Uzi Nissan to try and buy the name off him. He wanted millions; the company refused to play ball. So the lawyers were summoned, and now Nissan Motor Co. is suing to have the domain transferred to it.

It’s a really interesting snapshot of the old domain-name wars, which I’d thought were pretty much over. It’s quite ironic, too — because if Nissan Motor Co. had just waited until this year, I bet Uzi Nissan would have sold that domain for probably $200,000, or even less.

Why? Because nobody pays big cash for domains any more. And why don’t they do that? For a seldom-discussed reason: Because very few Net users type in raw URLs any more. People just go to Google and pump in a search term instead. Looking for Nissan cars? You pump “Nissan” into Google. And what do you get? That’s right — Nissan Motor Co.’s official site, as the first two hits. It doesn’t matter that they don’t have a site at Nissan.com; you find them anyway.

Consider what’s happening here. Google has effectively decreased the importance of owning one’s own precise dot-com brand name. When people use Google, they find you no matter what your URL is.

Want more proof? Collision Detection itself is a good example. Back before I launched this, I wanted to get CliveThompson.com. But I couldn’t; it’s owned by someone in Britain, possibly someone who’s trying to sell it to the incredibly rich Sir Clive Thompson, founder of the giant British pest-control/security/conferencing/tropical-plants/hygiene/parcels-delivery empire (no, I’m not making that up).

So I figured, okay, if I can’t have CliveThompson.com, I’ll just use Google to do an end-run around this. I’ll set up a blog that has my name on it, and see whether the whole machinery of linking will work. Sure enough — after a couple of months, this blog started appearing as the top hit in Google for “Clive Thompson”. It doesn’t matter who owns the actual “brand name” of Clive Thompson. This place is where you wind up.

Which reminds me that I should post more often, heh. I’ve been busy lately! Honest!

I'm Clive Thompson, the author of Smarter Than You Think: How Technology is Changing Our Minds for the Better (Penguin Press). You can order the book now at Amazon, Barnes and Noble, Powells, Indiebound, or through your local bookstore! I'm also a contributing writer for the New York Times Magazine and a columnist for Wired magazine. Email is here or ping me via the antiquated form of AOL IM (pomeranian99).
