Dig this: Isobella Jade is a 5’2” struggling model who recently wrote a memoir about her life experiences — entirely on the computers at a Manhattan Apple store. Apparently Jade has been homeless, so she doesn’t have a place to own or store a computer. One day she walked into the Apple store to check her Yahoo mail, and started writing notes about her life. She never stopped, wrote an entire book, and now she’s shopping it around.

FishbowlNY broke the story yesterday, and today they conducted an interview with her via email — also written, presumably, from the Apple store. An excerpt:

I stood at the Apple Store infront of a 17inch computer the one that I call “mine.” I had been going to the store at the time for about 9 months. As I stood in heels for up to 2 hours at a time, my contacts and my mouth would go dry and without a blink of the eye I would pace back and forth from writing about my experiences to DOING the experience and the daily tasks of responding to emails, researching modeling jobs and places to send my comp cards. I was always in a rush running into and out of the Apple Store up to 3 times a day … I have laughed out loud over it, typed frantically and received comments from employees to slow down and breath while I was staring at the screen viciously, vigorously. Sometimes asking a business man how to spell a word or two …

I love it. Personally, I’d been waiting for someone to get $10 million in VC funding for a Web 2.0 startup they created entirely inside an Apple store. You’d have pretty excellent business cards, eh?

This is just brilliant: A new plug design, angled out from the wall to make it easier to plug things in or out. It was created by University of Notre Dame student Julia Burke, and won an IDSA award this year:

The PLUG-IN’s upward-angled faceplate allows users to better orient themselves and a cord’s prongs before bending over or reaching behind furniture. This creates a direct sightline from the human eye to the faceplate and minimizes the distance necessary for a person to extend. It also provides additional leverage when removing of a difficult plug.

Technically, Burke invented this for the elderly — who have trouble bending far enough down to shove a 90-degree plug into the wall. But the ergonomics here are so dementedly superior to normal wall sockets that I want a full set of these for my household. Right. Now.

Why don’t Americans like soccer? In the current issue of the Weekly Standard, Frank Cannon and Richard Lessner write an acerbic takedown of soccer in which they sneer at various conventions of the sport, such as the fact that it requires the use of the head to bonk the ball — an act “contrary to all human instinct,” the writers aver, which is why sensible games like football or hockey encase the athletes’ heads in helmets. They also argue that “any game which prohibits the use of the hands is contrary to nature.”

Deliberately hissy stuff, so as you’d imagine, pro-soccer bloggers reacted by angrily calling Cannon and Lessner ignorant, cultural-isolationist boors who just don’t get it.

But here’s the thing: Cannon and Lessner do make one extremely interesting observation about soccer. Soccer matches rarely end in high scores, they point out, and the proportion of gameplay that draws near either goal is smaller than in many other sports. As they write:

These infrequent occurrences in which the soccer ball approaches the end zone — where goaltenders wile away their time perusing magazines, trimming their fingernails or inspecting blades of grass — rarely result in a shot on goal. Most often the ball ends up high over the goal, missing everything by 20 or 30 feet. These “near misses” typically send the fans into paroxysms; TV announcers scream themselves hoarse. Then the players mill about the field for another 20 or 30 minutes or so and the goaltenders return to their musings before the ball returns, like Halley’s comet in its far-flung orbit, for another pass in the general vicinity of the goal.

Mostly soccer is just guys in shorts running around aimlessly, a metaphor for the meaninglessness of life. Whole blocks of game time transpire during which absolutely nothing happens … It’s like gazing too long at a painting by de Kooning or Jackson Pollock. The more you look, the less there is to see.

Sure, they make their point snarkily. But they’re quite right that game design reflects the national soul. Americans are predisposed to enjoy games where the rules encourage lots of scoring. Soccer wasn’t architected that way, so Americans don’t like it. Baseball, basketball, and football, in contrast, were designed to allow for lots of scoring — and they are thus huge hits in America, a country obsessed with toting up manichean victories.

I seriously doubt Cannon and Lessner are even aware of the existence of ludology — the philosophy and design of play. But they have nonetheless illustrated precisely why ludology is such a powerful way to understand national cultures, and the differences between Americans and Europeans. It also helps you understand why the writers are so damn snarky, and their critics so correspondingly nasty: It’s because ludology is one of the most gut-level, passionate areas of philosophy, and play is so central to our identities. People can be tepid about whether or not they like a book or a movie. But nobody is wishy-washy about play. A game either totally rocks or totally sucks, and there is no phase transition between the two.

(Thanks to Arts and Leisure for this one!)

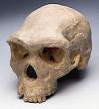

I’m coming late to this, but what a gnarly little statistic: Researchers have calculated that Neolithic residents of Britain had a 1 in 14 chance of being bashed in the head, and a 1 in 50 chance of dying from the injury.

Rick Schulting of Queen’s University Belfast co-directed the study, which looked at the remains of 350 skulls from the period. Apparently people were just going totally medieval — uh — on each other back then, as the New Scientist reports:

Most of the fatal blows were to the left side of the head, which would make sense if two right-handed people were fighting, says Schulting. The injuries were mostly caused by blunt objects, although some of the skulls seem to have been hacked by stone axes and there is some evidence that ears were chopped off.

Yikes.
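To put those odds in concrete terms, here is a rough back-of-envelope sketch. It assumes both figures (1 in 14 and 1 in 50) are measured against the whole sample of 350 skulls, which the reporting quoted here doesn't actually confirm, so treat the numbers as illustrative rather than as the study's own tallies.

```python
from fractions import Fraction

# Figures quoted in the post.
P_HEAD_INJURY = Fraction(1, 14)        # chance of being bashed in the head
P_FATAL_HEAD_INJURY = Fraction(1, 50)  # chance of dying from such an injury
SKULLS = 350                           # skulls examined in the study

# Assumption: both odds apply to the full sample, not to a subset of it.
expected_injured = SKULLS * P_HEAD_INJURY
expected_fatal = SKULLS * P_FATAL_HEAD_INJURY

# Under the same assumption, the share of head injuries that proved fatal.
p_fatal_given_injured = P_FATAL_HEAD_INJURY / P_HEAD_INJURY

print(f"Expected skulls with head injuries: {expected_injured}")
print(f"Expected fatal head injuries: {expected_fatal}")
print(f"Share of head injuries that were fatal: {float(p_fatal_given_injured):.0%}")
```

If that reading is right, more than a quarter of the people who took a blow to the head died of it.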

(Thanks to Arts and Letters Daily for this one!)

Behold the Electrolux Death Ray! California artist Greg Brotherton makes these incredibly gorgeous faux-retro weapons by cannibalizing 1950s gear like Electrolux vacuum cleaners. The EDR is a vacuum on top of a Steelcase chair base; when you fire it, a halogen bulb in the center lights a bunch of acrylic rods ruby-red, while the whine of six German siren whistles — powered by the vacuum’s pressure — fills the air with Cold War dread.

As the promotional write-up explains:

Hailed as the Rolls-Royce of atomic weapons, the Electrolux Deathray is the ultimate blend of devastation and design. Custom made to order in your choice of atomic chrome or military field colors the standard Deathray is the perfect addition to any arsenal. Upgrades include atomic control rods in cobalt, ruby or emerald and a choice of firing options from a pencil thin vortex ray to a single pulse moon smasher.

Check out the videos of the Deathray in action, complete with Plan-9 cheesetastic special f/x!

And sure, okay, this is traditionally arch-ironic hipster humor. But Brotherton has neatly identified something I’ve always loved about 1950s industrial design: Everything looked like a weapon. Vacuum cleaners, pens, big-finned cars, cigarette cases, wall clocks, you name it. With all the sweeping chrome, steampunk lug-nuts and aerodynamic lines, it was as if everything had been rigorously designed to achieve escape velocity and rain death upon the commies.

If one can read the spirit of an age in its industrial design, it makes you wonder what you’d learn by scrutinizing our tools today. Ascetic, monklike ipods; cars that look like trilobites. What’s it all add up to?

(Thanks to Brian Corcoran for this one!)

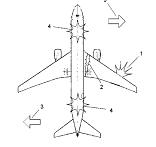

An inventor in Bangkok has just patented a new way to safely crash-land a plane: By blowing one of its wings off and sending it into a spiralling dive, which — he claims — would give it a helicopter-like soft landing.

Here’s how it works, in his words:

A signal from the altimeter will detonate the explosive charges, with the thrust forward 1, causing a controlled separation of the wing, and the thrust needed to cause a very strong force in the rearward direction of this wing. The explosive devices will provide enough to break the wing from the airplane at the fuselage 2. This force will then, make the whole airplane spin on a horizontal plane and in the direction of the missing wing 3. This spin will cause the following: [0006] (1) The spin will cause centrifugal force between the wing that remains intact and the fuselage. Thus maintaining the horizontal plane of the airplane while in a spiral spin. [0007] (2) The spin will cause the intact wing to work in the same manner as the rotor of the helicopter, producing lift, so that the airplane slowly decreases altitude, instead of a free fall descent.

“Attention, passengers. In the event of a need for a crash landing, please return to your seats, fasten your seat belts, and stow your tray tables — before we rip the left wing off and turn this airplane into a shrieking, plunging PINWHEEL OF DEATH.”

(Thanks to the New Scientist Invention Blog for this one!)

I love it: Some engineers at the Tokyo Institute of Technology in Japan are developing a device that you can point at a smell to record a sample of it — then “play” it back later on. As the New Scientist reports:

Somboon’s system will use 15 chemical-sensing microchips, or electronic noses, to pick up a broad range of aromas. These are then used to create a digital recipe from a set of 96 chemicals that can be chosen according to the purpose of each individual gadget. When you want to replay a smell, drops from the relevant vials are mixed, heated and vaporised. In tests so far, the system has successfully recorded and reproduced the smell of orange, lemon, apple, banana and melon. “We can even tell a green apple from a red apple,” Somboon says.

This thing might actually work. The lab’s web site is down, but Google’s cached copy of their experiment page shows that they do seem to be having some success with “recording and reproducing citrus flavors”.

Nonetheless, I can’t stop giggling. There is no technology more justly mocked than Smell-O-Vision. Yet in a weird way, maybe there’s actually a use for an olfactory iPod. Smell is powerfully related to memory, so one might wonder whether this device could actually be useful as a memory aid: When you’re trying to remember the details of a situation, you record its smell, and then play it back later as a cognitive priming device.

Then again, when smells are removed from their context they can be kind of creepy. I recently visited a lab where they develop artificial flavors — extracting the essence of the flavor and smell of, say, buttered popcorn, or bacon, or a hamburger. And let me tell you, when you hold up a tiny stick with a dab of hamburger scent on it and your nose is overwhelmed by the smell of an actual, real burger, it’s strangely unsettling: It feels less like the wonderful odor of a burger joint and more like you’re experiencing a psychotic break.

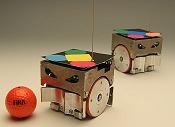

Strikers jostling for control, goalkeepers frantically fighting to save their teams, international reputations at stake: Yes, folks, it’s once again time for that other international soccer competition — the RoboWorld Cup!

Beginning tomorrow, millions of fans — or at least dozens — from around the world will converge on Germany to watch tiny cube-shaped robots fight over a ball. And what an excellent ball, eh? Check that thing out: A regulation golf ball painted orange. This is the punk rock of soccer.

As many who have witnessed a MiroSot game will testify, the excitement always runs high especially when two strong robot-soccer teams meet. During the match, the robot players autonomously tackle many unfamiliar situations that arise due to the different strategies, hardware and control software technologies employed in the opponent robot players. Like in a FIFA World Cup soccer match, no one knows for sure which team will win until the final whistle.

I hope they put video online.

Back in 1994, Mari Kimura introduced the world to a whole new way to play the violin — using subharmonics. Basically, she can produce notes that are up to an octave above and an octave below a violin’s normal range, transforming it from a glass-like synthesizer to a booming cello. (Here’s an audio file of her playing an octave below open G, the violin’s lowest note.)

The thing is, not even Kimura can explain exactly how she does it. So a bunch of scientists at the University of Tromso in Norway recently brought her into an echo-free chamber to record some subharmonic playing; they’re currently studying the data and trying to figure it out. As they explain in this press release:

“Kimura makes a violin string vibrate in a totally new way. In physics we call this a driven and damped non-linear system, which we are particularly preoccupied with in our research. By understanding the way she plays the violin, we are contributing to understanding of similar processes in the nature”, says Hanssen. [snip]

“I have done this for ten years, and the researchers in US and Japan have tried to figure it out for as long. I don’t really know what it is I do, because I have an empirical approach to it. It all happens by the method of trial and error,” says Kimura.

Kimura has written several primers on her technique in the past, and taught several students how to do this — but neither she nor other subharmonicizers has adequately described what the hell they’re doing with that bow. She can describe the fretwork fairly well, as she does here, but the bow magic seems to be a matter of “feel”.

I’ll be intrigued to see what the Norwegian folks find out! Kimura has written several pieces specifically for a subharmonically-played violin — some links to audio files are here online — and they’re quite creepily beautiful to listen to. My favorite is the ending to this snippet of “Subharmonic 2nd”, where Kimura fades out on a quiet blizzard of subharmonic noise; it sounds like a couple of ghost violins muttering at you from a different plane of existence. Check out the way-kewl special notations she’s invented for scripting subharmonic playing.

If she ever plays this stuff live in New York I am so there.

The government of Spain today apparently declared support for the right to “freedom and life” for great apes — making it the first world legislature to recognize the rights of non-human entities.

It seems they were swayed by the lobbying of the Great Ape Project, the brainchild of philosopher Peter Singer. Singer’s point has long been that the category of “animals” is weirdly broad and imprecise: Both chimpanzees and snakes are classified as animals, but for Singer this makes no sense, because chimpanzees are closer to humans than to snakes. Anyway, Singer’s organization includes a “Declaration on Great Apes” that says that apes should not be killed except in self-defense, that they are not to be “arbitrarily deprived of their liberty,” and ought not to be subject to torture. I’ll avoid the obvious Gitmo-Bay joke and point out that I’m not sure whether Spain is adopting Singer’s Declaration outright, because the Reuters story doesn’t clarify it.

Interestingly, animal-rights thinkers argue that Spain’s actions constitute a philosophical tipping point. As Reuters wrote:

The Spanish move could set a precedent for greater legal protection for other animals, including elephants, whales and dolphins, said Paul Waldau, director of the Center for Animals and Public Policy at Tufts University.

“We were born into a society where humans alone are the sole focus, and we begin to expand to the non-human great apes. It isn’t easy for us to see how far that expansion will go, but it’s very clear we need to expand beyond humans,” Waldau said.

They may also want to look at the potential rights of our cephalopod overlords. As Eric Scigliano documented in a wonderful Discover magazine piece in 2003, octopuses are so freakishly smart that they’ll fashion toys out of debris in tanks just to stave off boredom. And given that we are eventually going to be totally 0wnz0r3d in the Giant Squid Uprising, it would only be prudent to buy some goodwill by enshrining the rights of Architeuthis via the UN.

See that red box above? It’s an “anti-colorblindness” test: It contains an image that only the colorblind can see. Aaron Clauset developed the idea and designed a few images that you can check out on his web site; as he notes …

If it’s painfully obvious that there’s another image there, then you’re probably colorblind to some degree in the red part of the spectrum. Can’t see it? Try looking at the white space at either side of the image, you might be able to see the object by using your contrast-sensitive rods (which are concentrated more heavily in your peripheral vision). Don’t give up if you can’t see it, that’s the whole point — this is an *anti* colorblind test.

I love this idea: A way of regarding colorblindness so that it is a special ability, not a visual handicap. Keep in mind that my replication of his “red” test above has it shrunk down to half-size; the effect probably doesn’t work unless you go look at the original test at full size here. He also shows the solutions here, in case you’re still scratching your head wondering what secret the pictures contain.

(Thanks to Reddit for this one!)

Behold the “Teamgeist” — a soccer ball with such crazy-new physics that it is apparently annoying the heck out of World Cup goalkeepers. Adidas recently invented the Teamgeist at the behest of FIFA, which wanted a ball that would give superior control when kicked. Whereas a normal soccer ball is composed of 32 panels stitched together, the Teamgeist is made of only 14. This makes it rounder and less likely to pick up water on a rainy day, and improves the kicking surface.

Whatever one thinks of Adidas’ PR on this one, the players themselves are noticing a difference, as a story in Deutsche Welle points out:

“With long shots, it floats and moves a lot which makes it difficult to read,” said Brazilian superstar Ronaldinho. “It’s perfect for attackers.” [snip]

“Something is obviously going on with the ball,” says USA’s Kasey Keller, who was the victim of the curling terror unleashed by the Czech Republic’s Rosicky on June 12. “It’s a very light ball. The difference is only a fraction of a second but it’s a big difference. This ball has a wobble. It’s not an easy ball to catch.”

“At the last World Cup there were hardly any spectacular long-range goals,” Keller added. “We had two in the first game so something is obviously going on.”

I’m afraid I haven’t been watching the World Cup (not because, like most Americans, I think soccer/football is boring, but because I don’t watch any sports). Anyone have any thoughts on whether this ball has actually had the effect pundits claim it has?

Hedonists, rejoice! A couple of Columbia University researchers have found that in the long run, people tend to regret having missed out on opportunities for pleasure — and they wish they hadn’t been so diligent about working. What’s more, our attitudes reverse over time. In the short run, we’re proud of our ability to work hard and delay gratification. But years later, we regret that choice.

For example, in one of the Columbia experiments, subjects were asked to recall two points in time — one week ago, and five years ago. They were asked whether they were working or relaxing at that point in time, and whether they regretted it. When the point in time was a week ago, the workers were happy they were toiling, and the relaxers regretted their lassitude. When the point in time was five years ago, though, the opposite was true: People regretted being in the office, and wished they’d been slacking.

In another experiment, students who’d just come back from their break were polled. The ones who’d partied it up regretted their actions — while those who studied were virtuously smug. But when asked to recall the spring break from the previous year, suddenly more students regretted their choice not to party. When alumni were asked to recall their spring breaks of 40 years ago, the results were even starker: Those who hadn’t been doing beer shots out of a barber’s chair were stricken with remorse.

Check out that diagram above: It’s a Chart of Regret from the spring-break example. The top line shows the intensity — on a scale from 1 to 7 — of how much subjects regretted “having too much self control”. It’s an unmistakeable trend: As they get older, people are increasingly pissed that they were such goody two-shoes when they were young.

Of course, these findings totally violate how we’re told to behave. They also violate how we think about ourselves. When asked to define their values in the abstract, people regularly claim that delaying gratification gives you a better life. Yet when asked to think about specific incidents in their lives — as these researchers asked them to — those values crumble, obviously.

Why the reversal? Why do we opt for virtue in the short term, but prefer vice in the long? The reason, the researchers suggest, is in the mechanics of guilt: It’s an intense and painful emotion in the here-and-now, but fades over time. As they write:

Whereas guilt is an acute, hot emotion, missing out is a colder, contemplative feeling. Therefore, indulgence guilt is expected to predominate in the temporal proximity of the relevant self-control choice, but subsequently diminish over time.

Now there’s a conclusion that will deeply freak out social conservatives.

Though one could also question whether we’re seeing the whole picture here. When you poll people who are in college and have graduated from it, you’ve got a distorted landscape; these are people who have already opted for an activity (college) that inherently involves putting aside some pleasure for long-term gain, and who are going to benefit from it. But what if you took a bunch of people who’d dropped out of college or high-school for hedonistic reasons — i.e. not because they couldn’t afford to attend, but because they found it boring — and polled them ten years later? Would you get the same response? If they’d failed to get good jobs and were scrabbling to get by, would their youthful trade-off still seem worth it?

Last week, Wired News published my latest video-game column — in which I talked about the rise of “episodic” play: Games that come out in short instalments, like TV shows. General musing on the literary style of episodic narrative, going back to Charles Dickens, ensues. The column is online for free here at the Wired News site, and a permanent copy is archived below!

Same bat-time, same bat-channel

How “episodic” play could save gaming

by Clive Thompson

A good TV series is a well-honed machine. This is particularly true of a mystery or action series like 24 or Lost: Each week you get fiendish plot twists, Elizabethan character conspiracies, hinted-at clues — then an agonizing cliffhanger. No wonder we wind up planning our schedules around these shows, plunking down on the couch to get our weekly fix.

What if video games worked that way too?

Does our language change our conceptions of how time works? In a neat Cognitive Science paper called “With the Future Behind Them”, some researchers document the intriguing culture of the Aymara Indians of the high Andes. Their language inverts our traditional metaphors of time, as a column in today’s New York Times Science section notes:

The Aymara call the future qhipa pacha/timpu, meaning back or behind time, and the past nayra pacha/timpu, meaning front time. And they gesture ahead of them when remembering things past, and backward when talking about the future.

These are not mere mannerisms, the researchers argue; they are windows into the minds of Aymara speakers, who have a conception of future and past that is different from just about everyone else’s.

The authors say the Aymara speakers see the difference between what is known and not known as paramount, and what is known is what you see in front of you, with your own eyes.

The past is known, so it lies ahead of you. (Nayra, or “past,” literally means eye and sight, as well as front.) The future is unknown, so it lies behind you, where you can’t see.

It’s such a neat concept. It reminds me of the way that conservatives — real, serious conservatives, by which I mean the Burkean type — regard the future as so exhaustively predicted by the past that it is far more important to examine the latter than to speculate about the former. That picture above — which I pulled from the PDF of the paper, online here — is of an Aymaran talking about the future of his community. He’s gesturing behind him as he says “it seems we are going toward the worst”. Ironically, the Aymarans are indeed dying out, so before long their language will vanish into that vast expanse of the past that lies, I suppose, before us.

I’m coming to this one late, but some wits at The Economist recently plotted out some interesting trends in razor-blade design. They charted out the dates on which single, double, treble, quadruple and quintuple-bladed razors emerged, and noticed that the rate of increase in the number of blades per razor-head has been accelerating. It took 80 years for the industry to add a second blade, about another 15 to add the third, then only two or three years between the four-bladed Schick Quattro and the five-bladed Gillette Fusion. The story is here, and Avram Grumer wrote a funny post pointing out where this is all headed:

Now, that power-law curve predicts 14-bladed razors by the year 2100, but that’s not the interesting curve. The interesting curve is the hyperbolic one, for two reasons: One, it matches the real-world data. And two, it goes to infinity in 2015. And how are you going to get an asymptotically-accelerating number of blades onto a razor? Why, you’d need godlike super-technology to do that.

Friends, it’s clear what’s upon us: The Gillette Singularity — the moment at which the act of shaving becomes so radically unlike any shaving before it that history no longer provides us a guide to what lies before us.
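For the curious, the hyperbolic curve is easy to sketch. A hyperbola of the form blades = a / (t0 - year) blows up at year t0, and since 1/blades is then a straight line in the year, an ordinary least-squares line is enough to estimate it. The launch years below are illustrative assumptions, not the data The Economist or Grumer used, so the blow-up year this prints will not necessarily match the 2015 quoted above. The function names are made up for this sketch too.

```python
# Illustrative (year, blade count) pairs. These years are ASSUMED for the
# sketch; the post gives only approximate gaps between razor generations.
LAUNCHES = [(1903, 1), (1971, 2), (1998, 3), (2003, 4), (2006, 5)]


def fit_hyperbola(points):
    """Fit blades = a / (t0 - year) via a least-squares line on 1/blades."""
    n = len(points)
    xs = [year for year, _ in points]
    ys = [1.0 / blades for _, blades in points]
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx                    # equals -1/a
    intercept = y_mean - slope * x_mean  # equals t0/a
    a = -1.0 / slope
    t0 = -intercept / slope              # year where 1/blades reaches zero
    return a, t0


def predicted_blades(year, a, t0):
    """Blade count the fitted hyperbola predicts for a year before t0."""
    return a / (t0 - year)


a, t0 = fit_hyperbola(LAUNCHES)
print(f"Fitted blow-up year (the 'singularity'): {t0:.0f}")
print(f"Predicted blades in 2030: {predicted_blades(2030, a, t0):.1f}")
```

The exact date moves around a lot depending on which launch years you feed in, which is part of the joke: five data points will support almost any apocalypse you like.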

Personally, the whole four- and five-blade thing kinda baffles me. If I try shaving with anything more than two blades, the bathroom turns into a total slaughterhouse — blood and guts on the ceiling. I have yet to find a razor that shaves as well as the original, simple Gillette Sensor. Yet the sad fact is that as the razor industry jetpacks its way into the eschatological glory of infinitely-bladed heads, the companies have scaled back production of their creaky two-bladed models. Locating an actual package of Sensor razors here in New York is like trying to find a rotary pay phone.

(Thanks to Majikthise for this one!)

Think that email you’re sending off to your coworker is pretty funny? According to a recent study (PDF link), the odds are that she’ll find it only half as funny as you do.

A trio of business scholars ran an interesting experiment: They took a bunch of people and had them write emails in various tones of voice, including “sarcastic” and “funny”. Then they sent them to a handful of recipients. It turns out that the recipients were frequently unable to correctly read the tone that the writer intended: Only 56% were able to accurately figure out that an email was sarcastically phrased.

Things fared even worse with humor. The email writers were asked to compose a funny email, and to rate it on an ascending scale of 1 to 10 — both in terms of how funny they thought it was, and how funny they predicted their readers would find it. On average, the writers rated their own hilarity level at 8.16, and predicted that readers would find them a laff-a-rific 7.27. In reality, the stone-faced recipients thought the emails were only 3.55 funny.
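The size of that gap is worth spelling out. This small sketch uses only the three averages reported above; the function names are invented for illustration and aren't anything from the study itself.

```python
# Average ratings reported in the post, on a 1-to-10 scale.
SELF_RATING = 8.16              # how funny writers found their own emails
PREDICTED_READER_RATING = 7.27  # how funny they expected readers to find them
ACTUAL_READER_RATING = 3.55     # how funny readers actually found them


def overconfidence_gap(predicted, actual):
    """Points by which the writers overestimated their readers' amusement."""
    return predicted - actual


def funniness_ratio(actual, reference):
    """Reader rating as a fraction of a reference rating."""
    return actual / reference


gap = overconfidence_gap(PREDICTED_READER_RATING, ACTUAL_READER_RATING)
ratio_vs_prediction = funniness_ratio(ACTUAL_READER_RATING, PREDICTED_READER_RATING)
ratio_vs_self = funniness_ratio(ACTUAL_READER_RATING, SELF_RATING)

print(f"Writers overshot by {gap:.2f} points")
print(f"Readers found emails {ratio_vs_prediction:.0%} as funny as predicted")
print(f"Readers found emails {ratio_vs_self:.0%} as funny as the writers did")
```

Both ratios land a bit under one half, which is where the "only half as funny" rule of thumb comes from.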

Obviously, there are a couple of conclusions here. Either a) people are crappy writers; b) people are crappy readers; or c) a subtle mixture of the two governs all online communications, ensuring that we have no clue what the hell anyone else is trying to say. Nor is this problem solely limited to email; as the authors note:

Although our focus here has been on e-mail miscalibrations, we believe that the overconfidence we have documented here likely characterizes a wide range of rapidly emerging media types. Chat room, instant messaging, text-based gaming environments — all have been touted for their superiority to asynchronous text media such as e-mail because of the dynamic nature of the discourse and ability to provide rapid feedback … In fact, we suspect the synchronous and rapid nature of these mediums may actually increase the rift between senders and receivers. [italics in original]

Heh. World of Warcraft chat-channel trash-talk — now there’s a medium of rigorously crafted prose.

The Harvard Business Review has discovered online worlds and avatars — and, in this piece online here, is set palpably drooling at the marketing opportunities therein. It’s a pretty funny piece; since it’s written for biz-dev weasels who are total n00bs to gaming culture, the authors are forced to adopt the instantly-recognizable prose style of much mainstream gaming writing: Paper-dry, Britannica-class descriptions of the freaky weirdos they encounter (people who wear “provocative” outfits in Second Life! Or even dress as — get this — animals!)

Anyway, the point is, once the article is finished with its obligatory Andy-Rooney spit-takes, it makes some points both fascinating and horrifying. Avatar-based worlds, they point out, are a terrific way to understand what your consumer wants, because as Henry Jenkins notes in a quote, “Marketing depends on soliciting people’s dreams, and here those dreams are on overt display.” Then there’s the matter of the growing piles of greenbacks people are spending online: $5 million in US dollar equivalents each month for avatar-to-avatar virtual purchases in Second Life alone. But where the lid really rips off, the authors note, is in data collection. In a virtual world, everything an avatar does — literally everything — is loggable and monitorable. Thus …

… the amount of marketing and purchasing data that could be mined is staggering. An avatar’s digital nature means that every one of its moves — for example, perusing products in a store and discussing them with a friend — can be tracked and logged in a database. This behavioral information, organized by individual avatar, aside from being priceless to marketers in the long term, could be processed immediately. An avatar clerk might appear from behind the counter and offer to answer an avatar customer’s question — questions the clerk would already know because they would have been gathered and recorded in the database.

Furthermore, the avatar clerk might automatically adjust his or her behavior to become more appealing to the avatar customer. Research conducted at Stanford University’s Virtual Human Interaction Lab has found that users are more strongly influenced by avatars who mimic their own avatars’ body movements and mirror their own appearance. This virtual manifestation of an old sales trick makes avatars potentially, if insidiously, powerful salespeople. Using a simple computer script, the selling avatar clerk is able to subtly and automatically tailor its behavior — its gait, the way it turns its head, its facial features — to the avatar buyer’s, thus making the clerk seem more friendly, interesting, honest, and persuasive.

Jesus, now even the marketing trolls are reading and quoting Snow Crash. We’re doomed.

(Thanks to El Rey for this one!)

One hundred nations are collaborating on building the world’s largest seed bank — a storage facility for 2 million different varieties of plant life. It’ll be located in frozen Svalbard, up in a section of Norway located above the Arctic Circle, and it’ll be hermetically sealed with a couple of feet of concrete. The idea, as the Washington Post reports, is to provide a backup copy of our biodiversity — so when the planet gets schmucked by a nuclear holocaust, an asteroid strike, or global warming, we can reboot and try again.

Apparently there are already lots of seed banks around the world, but they’re all pretty insecure and have collections that are really incomplete:

“Svalbard is meant to be the bank of last resort,” said Pat Mooney, executive director of ETC Group, a Canadian civil society organization focused on food security. “It’s where you go if you can’t go anywhere else. It’s the backup for the whole world.”

Archaeologists have just discovered what appears to be the oldest portrait of a human face ever — a cave drawing from 27,000 years ago. Here’s the cool thing, though: The drawing is a set of sharp black lines of positively modernist abstraction. Writing in The Guardian, Jonathan Jones notes that the face looks oddly like a Modigliani, and goes on to ask an interesting question:

Why did the first artists draw like Picasso? It has to be because of their attitude to the face, to their own embodiment and that of the people they lived with — it has to be because of how they saw human beings specifically, because this is very different from the way they painted animals. Stone Age artists could paint with a verisimilitude that takes your breath away; the horse panel in the Chauvet cave, older than this drawing, is covered with acutely observed heads of aurochs (extinct relatives of cattle) and horses whose tufty manes are painted with a clarity Da Vinci would have admired.

Even back 27,000 years ago, Jones suggests, humans realized there was something unique about humans — something that made them different from the animal kingdom. Indeed, Jones figures this cave drawing challenges the idea that portraiture as an art form only congealed in the Renaissance, as an offshoot of the growth of Western individualism. Or to put it another way: How much of a sense of the “individual” did people have 27,000 years ago?

Of course, one could also point out that the reason the cave drawing looks like a Modigliani is that Modigliani himself probably took inspiration from the stylized nature of cave drawings; or to put that another way, how modern is modernism?

Here’s some fascinating economic work: Recent studies show that if you graduate into a recession, it’ll hurt your earnings for the rest of your life.

The New York Times recently reported on this work, and it’s pretty freaky stuff. In one study, Paul Oyer of Stanford tracked the earnings of biz-school grads from 1960 to 1997. His results? As the Times reports:

He found that the performance of the stock market in the two years the students were in business school played a major role in whether they took an investment banking job upon graduating and, because such jobs pay extremely well, upon the average salary of the class. That is no surprise. The startling thing about the data was his finding that the relative income differences among classes remained, even as much as 20 years later.

The Stanford class of 1988, for example, entered the job market just after the market crash of 1987. Banks were not hiring, and so average wages for that class were lower than for the class of 1987 or for later classes that came out after the market recovered. Even a decade or more later, the class of 1988 was still earning significantly less. They missed the plum jobs right out of the gate and never recovered.

Apparently, America is no longer a country where you can start at the bottom of the greasy pole and work your way up. Nope — nowadays, people care about where you start, so your first job out of college needs to be impressive and high-earning right off the bat. If it is, then you lock into a cycle of self-perpetuating mythology: “Hey, that guy’s paid so much, he must be good. We should offer even more and hire him away.” The dismal reverse is equally true.

In one sense, this is just another manifestation of the winner-take-all dynamics that emerge in our power-law-dominated world. It would also explain why the folks who graduated in the early 90s — in the trough of a nasty recession — were slagged as “slackers”, while the “Generation Y” kids who graduated into the Caligulan dot-com boom of the late 90s were revered as energetic, idealistic, forward-thinking, woof woof, ribbit ribbit. They were tarred — or starred — by their economic context.

Mind you, since I personally graduated in 1992 in Toronto, when unemployment was a mindboggling 25% for people under 25, I’m trying not to dwell too much on this, heh.

Ever played Sudoku? You and about 40 gazillion other people, my friend. The number-logic game has become the breakout hit of the puzzle world in the last year, and New York magazine recently asked me to write a story that examines its Xtreme popularity.

The story is based on a profile of Will Shortz — the brilliant editor of the New York Times’ crossword puzzle. There’s a documentary released this week called Wordplay, and it’s devoted to Shortz and his community of crossword fans; in the piece, I talk about what makes a good crossword and counterpose it with Sudoku — the anti-crossword, a puzzle that doesn’t require you be culturally aware, literate, or even numerate.

The story is online free here, and a copy of it is below for permanent archive!

The Puzzlemaster’s Dilemma

Will Shortz’s crosswords are about to make him a word-nerd movie star. But Sudoku is making him rich.

“This is a great puzzle,” says Will Shortz.

The crossword editor for the New York Times is giving me an advance peek at the Sunday puzzle he will publish a week later. “See, now this grid is jam-packed with fresh uses of language,” Shortz says, sitting in his home office amid stacks of reference books like Brands and Companies 1995 and The Encyclopedia of American Cars. “MRPEANUT, great answer. GIJOE, great! Only five letters, yet it has a J in the middle — very pretty.” Shortz has only one complaint about the puzzle: It uses the abbreviation NLE for “NL East,” which he thinks is too obscure. It only takes him a few minutes to deftly scribble in a new tangle of words. AAMES, of “Willie Aames,” turns into AIMAT; AMMO becomes OLIO; and NLE becomes ULA — a “diminutive suffix,” such as at the end of “spatula.”

I’m coming late to this — I’m coming late to everything, because I haven’t blogged for two weeks! — but last week Wired News published my latest video-game column. It was about Jaws Unleashed, and the subtle pleasures of playing as another species. The story is online free here, and a permanent copy is archived below!

Animal Instinct

by Clive Thompson

The bikini-clad swimmers have no clue what’s coming.

Deep beneath the surface of the water, I glide like a cruise missile of death, quietly circling my prey and picking my angle of attack. Then I sense an opening and bam: I shoot upward, sink my teeth into one wriggling leg, and begin ripping my prey back and forth. Blood mixes with the frothy water-bubbles as the shrieking begins, and pretty soon I’m snacking on yet another resident — oops, former resident — of Amity Island.

That’s right: Jaws is back, my friends. Except this time, instead of cowering in terror in my theater seat, I get to control the shark, and I have to admit — it’s an unexpectedly neat experience.

MillionArtists is a fundraising project with an interesting way of gathering donations: Everyone who gives money can choose the color and placement of a single pixel on a massive online canvas. In theory, as thousands or millions of people donate, it’ll take shape as a picture.

But a picture of what? Heh — interesting question. A story in the Globe and Mail points out that at the moment, there are only 88 donations, so the pixels are so insignificant on the sprawling digital canvas that they “could easily be mistaken for dirt on the screen.” (That’s a possibly lovely, if dispiriting, metaphor for philanthropy’s always-heroic but never-enough attempt to solve the world’s problems.) You can check the painting out in real-time here; a snapshot of the current pic, shrunk down to 1/10th size, is above. The guys running the project describe the aesthetic of the project thusly:

I see the point regarding the “meaningful and pleasant look” and have to agree that our picture may become just “white noise” … On other hand I’d compare this “random pixel location” method to Jackson Pollock’s method of “dripping paint from cans with holes in the bottom”, but I must agree that mine is ever more extreme: when Pollock used his own senses to make what he believed reflects his art vision, I’m going to use sense of color of a million different people. Will I get the “meaningful and pleasant look” at the end? I do not know. Will it show the feelings of the million people? I believe it will.

A while back, I wrote a piece for Slate about whether “collaborative art” was possible — hundreds or thousands of people working, hivelike, on a single project, each unaware of the intentions or desires of the others. I think it is indeed possible that a hive can produce art, but it all depends on the framing device. The device here is so open-ended that it’s likely to produce an entropic beige sludge. But hey — it’ll be an entropic beige sludge that has raised a bunch of money for charity!

(Thanks to Jonathan Kotcheff for this one!)

I'm Clive Thompson, the author of Smarter Than You Think: How Technology is Changing Our Minds for the Better (Penguin Press). You can order the book now at Amazon, Barnes and Noble, Powells, Indiebound, or through your local bookstore! I'm also a contributing writer for the New York Times Magazine and a columnist for Wired magazine. Email is here or ping me via the antiquated form of AOL IM (pomeranian99).
