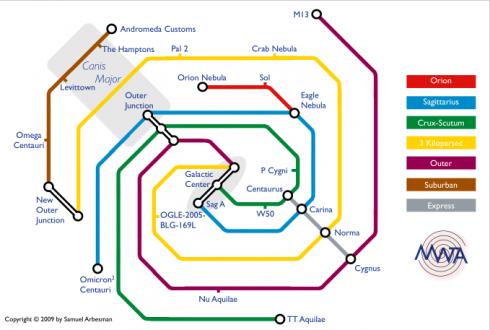

This one is really lovely. Samuel Arbesman, a computational sociologist at Harvard, has created the “Milky Way Transit Authority” — a London-tube-like map of our galaxy.

Our galaxy is unimaginably vast, and we really have no idea what is out there. We are discovering new planets in other star systems all the time, learning new facts about the galactic core, and even learning about whole new portions of the galaxy. This map is an attempt to approach our galaxy with a bit more familiarity than usual and get people thinking about long-term possibilities in outer space. Hopefully it can serve as a useful shorthand for our place in the Milky Way and its ‘important’ sights, and make inconceivable distances a bit less daunting. And while convenient interstellar travel is nothing more than a murky dream, and might always be that way, there is power in creating tools for beginning to wrap our minds around the interconnections of our galactic neighborhood.

I have attempted to make this map as accurate as possible: each line corresponds to an arm of our galaxy, and the stations are actual places in their proper locations. However, I am not an astronomer or astrophysicist, so there are certainly inaccuracies, gaps, and room for improvement. If you have a suggestion, comment, or criticism, please do not hesitate to contact me by emailing arbesman at gmail dot com.

(Thanks to Shareable.net for this one!)

Back in the late 90s, many newspapers reported this apocryphal exchange between Microsoft CEO Bill Gates and General Motors:

At a recent COMDEX, Bill Gates reportedly compared the computer industry with the auto industry and stated: “If GM had kept up the technology like the computer industry has, we would all be driving twenty-five dollar cars that got 1,000 miles per gallon.”

Recently General Motors addressed this comment by releasing the statement: “Yes, but would you want your car to crash twice a day?”

I was abruptly put in mind of this old joke when reading the latest news about Toyota’s crash-prone cars, because some of the fatal problems now appear to be based not in the mechanics of the cars, but in their software. The New York Times today reported on the story of 77-year-old Guadalupe Alberto, who died when her 2005 Camry accelerated out of control and crashed into a tree; “the crash is now being looked at as a possible example of problems with the electronic system that controls the throttle and engine speed in Toyotas.”

The point is, cars have gradually come to rely on more and more software for their control systems, so much so that, as the Times notes …

The electronic systems in modern cars and trucks — under new scrutiny as regulators continue to raise concerns about Toyota vehicles — are packed with up to 100 million lines of computer code, more than in some jet fighters.

“It would be easy to say the modern car is a computer on wheels, but it’s more like 30 or more computers on wheels,” said Bruce Emaus, the chairman of SAE International’s embedded software standards committee.

Maybe we shouldn’t be surprised if Toyota winds up wrestling with bug-caused crashes. Once software grows really huge, its creators often can’t vouch that it’s bug-free — that it’ll work as intended in all situations. Automakers have a vested and capitalistic interest in making sure their cars don’t crash, so I’m sure they’re pretty careful. But it’s practically a law of nature that when code gets huge, bugs multiply; the software becomes such a sprawling ecosystem that no single person can ever visualize how it works and what might go wrong. Worse, it’s even harder to guarantee a system’s behavior when it’s in the hands of millions of users, all behaving in idiosyncratic ways. They are the infinite monkeys bashing at the keyboard, and if there’s a bug in there somewhere, they’ll uncover it — and, if they do so while travelling 50 miles an hour, possibly kill themselves.

The problems of automobile software remind me of the problems I saw two years ago while writing about voting-machine software for the New York Times Magazine. As with cars, you’ve got software that is performing mission-critical work — executing democracy! — and it’s in the hands of millions of users doing all sorts of weird, unanticipated stuff (like double- or triple-touching touchscreens that are only designed for single-touch). Let’s leave aside the heated question of whether a manufacturer or hacker could throw an election by tampering with the software. The point is, even without recourse to that sort of skulduggery, what I found is that the machines so frequently crash, bug out, or just do head-scratchingly weird stuff that it’s no wonder so many people refuse to trust them.

So what’s the solution? Well, in the world of election software, many have suggested open-sourcing the code. If thousands of programmers worldwide could scrutinize voting-machine software, they’d find more bugs than the small number of programmers currently working on it under trade secrecy. And theoretically this could improve public confidence.

Would the same process improve automobile code? Should the software in our cars be open-sourced?

You may have heard about You Are Not A Gadget: A Manifesto, the new book by Jaron Lanier, the inventor of virtual reality. It’s been getting quite a lot of praise; in the New York Times, Michiko Kakutani called it “lucid, powerful and persuasive”, and the New Yorker suggested that “his argument will make intuitive sense.”

I was recently assigned to review the book by Bookforum, and that review is now on the newsstands. It’s an omnibus analysis of three books, loosely bungee-corded together by their common concern for how the Internet is changing the way we think, write and communicate. I had been looking forward to Lanier’s book in part because I really enjoyed his provocative 2006 essay “Digital Maoism”, and more importantly because I’ve interviewed him once or twice and thought he was an incredibly thoughtful guy. (What’s more, he’s a musician, and I often find I like the way musicians think.)

Unfortunately, You Are Not A Gadget is pretty dreadful. It has flashes of absolute genius, but is ruined by a flaw common to most woe-is-the-digital-age books: They begin by claiming the Internet is filled chiefly with either completely idiotic culture or nasty, mean-spirited, anonymous commentary — but they offer almost no evidence to document this. So you spend the entire book listening to Lanier explain why the Internet is such a dreadful place, without ever being convinced that it actually is. (I am currently sketching out an upcoming blog entry where I rant about this literary trend at numbing length.)

In contrast, the other two books I reviewed were written by academics who wondered about the impact of the Internet on society, but who actually decided to collect some data on it; not coincidentally, they came up with conclusions considerably less apocalyptic, and considerably more nuanced, than Lanier’s.

Research! It’s not just for breakfast any more.

Anyway, the review is online here for free at the Bookforum site, and a copy is archived below!

Floating Signifiers

The Internet hasn’t killed the English language — yet

by Clive Thompson

In the late 1870s, the advent of the telephone created a curious social question: What was the proper way to greet someone at the beginning of a call?

The first telephones were always “on” and connected pairwise to each other, so you didn’t need to dial a number to attract the attention of the person on the other end; you just picked up the handset and shouted something into it. But what?

Alexander Graham Bell argued that “Ahoy!” was best, since it had traditionally been used for hailing ships. But Thomas Edison, who was creating a competing telephone system for Western Union, proposed a different greeting: “Hello!,” a variation on “Halloo!,” a holler historically used to summon hounds during a hunt. As we know, Edison — aided by the hefty marketing budget of Western Union — won that battle, and hello became the routine way to begin a phone conversation.

Yet here’s the thing: For decades, hello was enormously controversial. That’s because prephone guardians of correct usage regarded it (and halloo) as vulgar. These late-nineteenth-century Emily Posts urged people not to use the word, and the dispute carried on until the 1940s. By the ’60s and ’70s, though, hello was fully domesticated, and people moved on to even more scandalously casual phrasings like hi and hey. Today, hello can actually sound slightly formal.

Life isn’t easy for the “scaly-foot gastropod”. This humble snail lives in hydrothermal vent fields two miles deep in the Indian Ocean, and is surrounded by vicious predators. For example, there’s the “cone snail”, which stabs at its victims with a harpoon-style tooth as a precursor to injecting them with paralyzing venom. Then there’s the Brachyuran crab, which has been known to squeeze its prey for three days in an attempt to kill it. Yowsa.

Ah, but the scaly-foot gastropod has its own tricks. To fight back, it long ago evolved a particularly cool defense structure: It takes the grains of iron sulfide floating in the water around it and incorporates them into the outer layer of its shell. It is thus an “iron-plated snail”.

Oh yes way. Scientists discovered Chrysomallon squamiferum in 1999, but they didn’t know a whole lot about the properties of its shell until this month, when a team led by MIT scientists decided to study it carefully. The team did a pile of spectroscopic and microscopic measurements of the shell, poked at it with a nanoindenter, and built a computer model of its properties to simulate how well it would hold up under various predator attacks.

The upshot, as they write in their paper (PDF here), is that the shell is “unlike any other known natural or synthetic engineered armor.” Part of its ability to resist damage seems to be the way the shell deforms when it’s struck: It produces cracks that dissipate the force of the blow, and nanoparticles that injure whatever is attacking it:

Within the indent region, consolidation of the granular structure is observed within and around the indent. Localized microfractures exhibit tortuous, branched, and noncontinuous pathways, as well as jagged crack fronts resulting from separation of granules, all of which are beneficial for energy dissipation and preventing catastrophic brittle fracture. Such microfracture modes may serve as a sacrificial mechanism. Upon indentation, inelastic deformation will be localized in the softer organic material between the granule interfaces, which allows for intergranular displacement and friction while simultaneously being compressed down into the softer ML [middle layer]. Shear of iron sulfide nanoparticles against the indenter surface is expected, in particular since penetrating attacks take place off-angle rather than directly on top of the shell apex, and can be facilitated by intergranular displacements during yielding of the OL [outer layer]. This provides a potential grinding abrasion and wear mechanism to deform and blunt the indenter (since biological penetrating threats are in reality deformable as well) that will continue throughout the entire indentation process.

Beyond awesome. This is Darwinian evolution mixed with, like, Burning Man.

Being scientists of biomimicry, the authors surmise that if it were possible to reverse-engineer the entire shell — it’s not just the outer iron layer that’s cool; there are also two inner layers with gooey nougat that are equally important in defending the snail — they could produce superstrong materials for military defense and “load-bearing”.

Fair enough. But personally I’m satisfied just to have more pure science that proves, yet again, the inexhaustible Weirdness Of The Briny Deep.

Iron snails, people! Iron snails.

(Thanks to the Eco Tone blog for alerting me to this one!)

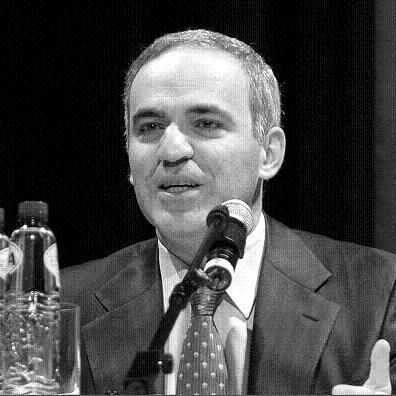

Back in 1997, chess grandmaster Garry Kasparov played against the IBM supercomputer Deep Blue, and lost. At the time it was widely regarded as a huge victory for artificial intelligence. But as Kasparov points out — in a fantastic new essay about computer chess in the New York Review of Books — experts had long predicted that a computer would eventually beat a human at chess.

That’s because chess software doesn’t need to analyze the game the way a human does. It just needs to do a “brute force” attack: It calculates all the possible games several moves out, finds the one that’s most advantageous to itself, and makes that play. Human grandmasters don’t work that way. They do not necessarily “see” the game several moves out. Indeed, they can’t — as Kasparov points out, chess is so complex that “a player looking eight moves ahead [faces] as many possible games as there are stars in the galaxy.”
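To make the “brute force” idea concrete, here is a minimal sketch of minimax search, the textbook form of this kind of look-ahead. It is not how Deep Blue actually worked: real engines add refinements such as alpha-beta pruning and a hand-tuned evaluation function, and chess is far too big for a toy example. So, as an assumption for illustration, the sketch uses a tiny take-away game (players alternately remove 1, 2, or 3 stones; whoever takes the last stone wins) as a stand-in, and the names `legal_moves`, `minimax` and `best_move` are hypothetical.

```python
from typing import List


def legal_moves(pile: int) -> List[int]:
    # Toy stand-in for chess (an assumption): remove 1, 2, or 3 stones.
    return [n for n in (1, 2, 3) if n <= pile]


def minimax(pile: int, depth: int, maximizing: bool) -> int:
    """Score a position by searching every line of play `depth` moves ahead.

    +1 = win for the searching (maximizing) player, -1 = loss,
    0 = unknown, because the search horizon ran out before the game ended.
    """
    if pile == 0:
        # The previous player took the last stone and won.
        return -1 if maximizing else 1
    if depth == 0:
        # Horizon reached; a real engine would call an evaluation function here.
        return 0
    scores = [minimax(pile - m, depth - 1, not maximizing) for m in legal_moves(pile)]
    # Each side assumes the other will pick the reply that is best for itself.
    return max(scores) if maximizing else min(scores)


def best_move(pile: int, depth: int) -> int:
    # Try every legal move, search the position it leads to, keep the best one.
    return max(legal_moves(pile), key=lambda m: minimax(pile - m, depth - 1, False))
```

With a pile of 5 and a deep enough search, `best_move(5, 10)` returns 1, leaving the opponent 4 stones: a lost position, since whatever they take, the searcher can take the rest. Notice that the number of positions examined multiplies by up to three at every level of the search; in chess, with dozens of legal moves in a typical position, that same multiplication is what makes looking eight moves ahead so staggering.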

Instead, people who are truly great at chess use the peculiarly human qualities of understanding, insight and intuition: They study oodles of games, encode that knowledge deeply in their brains, and practice incessantly. “As for how many moves ahead a grandmaster sees,” as Kasparov concludes, the real answer is: “Just one, the best one.” We like to think that artificial intelligence is replicating human smarts, but in reality it does something quite different. One doesn’t learn much about human intelligence by examining the way computers play chess, just as one doesn’t learn much about computer intelligence by examining the way humans play chess. They are fundamentally dissimilar processes.

But this gave Kasparov a fascinating idea. What if, instead of playing against one another, a computer and a human played together — as part of a team? If humans and computers think in very different ways, perhaps they’d be complementary. So in 1998 Kasparov put together an event called “Advanced Chess”, where humans played against one another, but each was allowed to also use a PC with the best available chess software. The chess players would enter positions into the machine, see what the computer thought was a good move, and use this to inform their own human analysis of the board. Cyborg chess!

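The format later spread to online “freestyle” tournaments, and the results were startling. As Kasparov writes of one such tournament, held in 2005: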

Lured by the substantial prize money, several groups of strong grandmasters working with several computers at the same time entered the competition. At first, the results seemed predictable. The teams of human plus machine dominated even the strongest computers. The chess machine Hydra, which is a chess-specific supercomputer like Deep Blue, was no match for a strong human player using a relatively weak laptop. Human strategic guidance combined with the tactical acuity of a computer was overwhelming.

The surprise came at the conclusion of the event. The winner was revealed to be not a grandmaster with a state-of-the-art PC but a pair of amateur American chess players using three computers at the same time. Their skill at manipulating and “coaching” their computers to look very deeply into positions effectively counteracted the superior chess understanding of their grandmaster opponents and the greater computational power of other participants. Weak human + machine + better process was superior to a strong computer alone and, more remarkably, superior to a strong human + machine + inferior process.

This stuff really fascinates me, because so much of our everyday life now transpires in precisely this fashion: We work as cyborgs, using machine intelligence to augment our human smarts. Google amplifies our ability to find information, or even to remember it (I often use it to resolve “tip of the tongue” moments, i.e. to locate the name of a person or concept I know but can’t quite put my finger on). Social-networking software gives us an ESP-level awareness of what’s going on in the lives of people we care about. Tools like Mint help us spot invisible patterns in how we’re spending, or blowing, our hard-earned cash. None of these tools replace human intelligence, or even work the way that human intelligence works. Indeed, they’re often cognitively quite alien processes — which is precisely why they can be so unsettling to some people, and why we’re still sort of figuring out how, and when, to use them. The arguments that currently rage about the social impact of Facebook and Google are, in a sense, arguments about what sort of cyborgs we want — or don’t want — to be.

What I love about Kasparov’s algorithm — “Weak human + machine + better process was superior to a strong computer alone and … superior to a strong human + machine + inferior process” — is that it suggests serious rewards accrue to those who figure out the best way to use thought-enhancing software. (Or rather, those who figure out a way that’s best for them; people always use tools in slightly different, idiosyncratic ways.) The process matters as much as the software itself. How often do you check it? When do you trust the help it’s offering, and when do you ignore it?

(That photo above is by Elke Wetzig, and released for use under the GNU Free Documentation License!)

I'm Clive Thompson, the author of Smarter Than You Think: How Technology is Changing Our Minds for the Better (Penguin Press). You can order the book now at Amazon, Barnes and Noble, Powells, Indiebound, or through your local bookstore! I'm also a contributing writer for the New York Times Magazine and a columnist for Wired magazine. Email is here or ping me via the antiquated form of AOL IM (pomeranian99).
