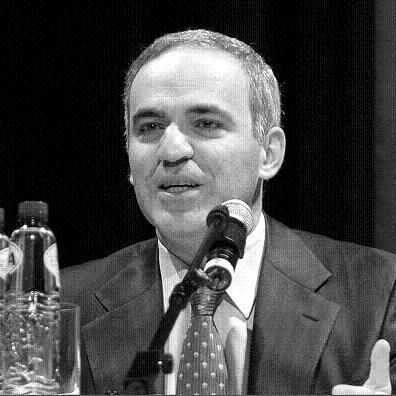

Back in 1997, chess grandmaster Garry Kasparov played against the IBM supercomputer Deep Blue, and lost. At the time it was widely regarded as a huge victory for artificial intelligence. But as Kasparov points out — in a fantastic new essay about computer chess in the New York Review of Books — experts had long predicted that a computer would eventually beat a human at chess.

That’s because chess software doesn’t need to analyze the game the way a human does. It just needs to do a “brute force” attack: It calculates all the possible games several moves out, finds the one that’s most advantageous to itself, and makes that play. Human grandmasters don’t work that way. They do not necessarily “see” the game several moves out. Indeed, they can’t — as Kasparov points out, chess is so complex that “a player looking eight moves ahead [faces] as many possible games as there are stars in the galaxy.”
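The brute-force idea is easier to see on a game far smaller than chess. What follows is only a rough sketch under stated assumptions: the toy stone-taking game (players alternate removing one to three stones, and whoever takes the last stone wins), the function names, and the scoring are all invented for illustration, and nothing here reflects how Deep Blue or any real chess program is actually written.

```python
from typing import List


def legal_moves(stones: int) -> List[int]:
    """A move removes 1, 2, or 3 stones, never more than remain."""
    return [n for n in (1, 2, 3) if n <= stones]


def search(stones: int, depth: int, my_turn: bool) -> int:
    """Score a position from the computer's point of view.

    +1 means the computer wins, -1 means it loses,
    0 means the lookahead ran out before the game ended.
    """
    if stones == 0:
        # The player who just moved took the last stone and won.
        return -1 if my_turn else 1
    if depth == 0:
        return 0
    scores = [
        search(stones - move, depth - 1, not my_turn)
        for move in legal_moves(stones)
    ]
    # Assume each side picks whatever is best for itself.
    return max(scores) if my_turn else min(scores)


def best_move(stones: int, depth: int) -> int:
    """Try every legal move, look `depth` turns ahead, keep the best one."""
    return max(
        legal_moves(stones),
        key=lambda move: search(stones - move, depth - 1, False),
    )


if __name__ == "__main__":
    print(best_move(5, 6))  # with 5 stones, the search picks taking 1
```

There is no understanding or intuition anywhere in that code. It simply plays out every line it can reach and picks the move with the best worst-case result, which is the contrast with human play that Kasparov draws.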

Instead, people who are truly great at chess use the peculiarly human qualities of understanding, insight and intuition: They study oodles of games, encode that knowledge deeply in their brains, and practice incessantly. “As for how many moves ahead a grandmaster sees,” Kasparov concludes, the real answer is “Just one, the best one.” We like to think that artificial intelligence is replicating human smarts, but in reality it does something quite different. One doesn’t learn much about human intelligence by examining the way computers play chess, just as one doesn’t learn much about computer intelligence by examining the way humans play chess. They are fundamentally dissimilar processes.

But this gave Kasparov a fascinating idea. What if, instead of playing against one another, a computer and a human played together — as part of a team? If humans and computers think in very different ways, perhaps they’d be complementary. So in 1998 Kasparov put together an event called “Advanced Chess”, where humans played against one another, but each was allowed to also use a PC with the best available chess software. The chess players would enter positions into the machine, see what the computer thought was a good move, and use this to inform their own human analysis of the board. Cyborg chess!

The results? As Kasparov writes:

Lured by the substantial prize money, several groups of strong grandmasters working with several computers at the same time entered the competition. At first, the results seemed predictable. The teams of human plus machine dominated even the strongest computers. The chess machine Hydra, which is a chess-specific supercomputer like Deep Blue, was no match for a strong human player using a relatively weak laptop. Human strategic guidance combined with the tactical acuity of a computer was overwhelming.

The surprise came at the conclusion of the event. The winner was revealed to be not a grandmaster with a state-of-the-art PC but a pair of amateur American chess players using three computers at the same time. Their skill at manipulating and “coaching” their computers to look very deeply into positions effectively counteracted the superior chess understanding of their grandmaster opponents and the greater computational power of other participants. Weak human + machine + better process was superior to a strong computer alone and, more remarkably, superior to a strong human + machine + inferior process.

This stuff really fascinates me, because so much of our everyday lives now transpires in precisely this fashion: We work as cyborgs, using machine intelligence to augment our human smarts. Google amplifies our ability to find information, or even to remember it (I often use it to resolve “tip of the tongue” moments, i.e. to locate the name of a person or concept I know but can’t quite put my finger on). Social-networking software gives us an ESP-level awareness of what’s going on in the lives of people we care about. Tools like Mint help us spot invisible patterns in how we’re spending, or blowing, our hard-earned cash. None of these tools replace human intelligence, or even work the way that human intelligence works. Indeed, they’re often cognitively quite alien processes, which is precisely why they can be so unsettling to some people, and why we’re still sort of figuring out how, and when, to use them. The arguments that currently rage about the social impact of Facebook and Google are, in a sense, arguments about what sort of cyborgs we want, or don’t want, to be.

What I love about Kasparov’s algorithm — “Weak human + machine + better process was superior to a strong computer alone and … superior to a strong human + machine + inferior process” — is that it suggests serious rewards accrue to those who figure out the best way to use thought-enhancing software. (Or rather, those who figure out a way that’s best for them; people always use tools in slightly different, idiosyncratic ways.) The process matters as much as the software itself. How often do you check it? When do you trust the help it’s offering, and when do you ignore it?

(That photo above is by Elke Wetzig, and released for use under the GNU Free Documentation License!)

I'm Clive Thompson, the author of Smarter Than You Think: How Technology is Changing Our Minds for the Better (Penguin Press). You can order the book now at Amazon, Barnes and Noble, Powells, Indiebound, or through your local bookstore! I'm also a contributing writer for the New York Times Magazine and a columnist for Wired magazine. Email is here or ping me via the antiquated form of AOL IM (pomeranian99).
