
In October of 1962, Douglas Engelbart imagined a remarkable new technology for writing. Engelbart is a serial visionary; among other things, he’s famous for having invented the computer mouse. But in his essay “Augmenting Human Intellect”, he envisioned a typing machine that was also equipped with a special sensing stylus. It would work like this: You’d type away at the machine, composing notes or a raw draft of a piece. But after you’d written a bunch of stuff, you could sit back, read it over, and if you found a passage you wanted to clip out and reproduce, you could just wave the stylus over the words and, presto, they’d be re-typed by your machine.

He was, in essence, imagining a machine that could electronically cut and paste.

Engelbart suspected cut-and-paste would have an enormous impact on the way we’d write. As he predicted:

This writing machine would permit you to use a new process of composing text. For instance, trial drafts could rapidly be composed from re-arranged excerpts of old drafts, together with new words or passages which you stop to type in. Your first draft could represent a free outpouring of thoughts in any order, with the inspection of foregoing thoughts continuously stimulating new considerations and ideas to be entered. If the tangle of thoughts represented by the draft became too complex, you would compile a reordered draft quickly. It would be practical for you to accommodate more complexity in the trails of thought you might build in search of the path that suits your needs.

Pretty amazing foresight, eh? He wrote that 50 years ago — when computers were still room-sized industrial tools — yet he nailed it: One of the biggest impacts of word processing has been the way it makes cutting and pasting a central part of how we organize our thoughts.

The funny thing is, cutting and pasting is now so routine that we often forget how strange it felt at first. I’m 42, old enough that I wrote my high-school essays — and even the essays of my first year of college — longhand on paper, then typed them up on a typewriter. The work of arranging and redacting my thoughts was done with a pencil and paper; the typewriter existed mostly just as a way to produce a good-looking final draft, though I’d occasionally buff or improve a sentence as I was typing it up. (Though I wouldn’t edit too much; if my attempts to tweak the sentence while typing made things worse, I’d have to laboriously white out my screwed-up text with Liquid Paper, a substance beyond foul.)

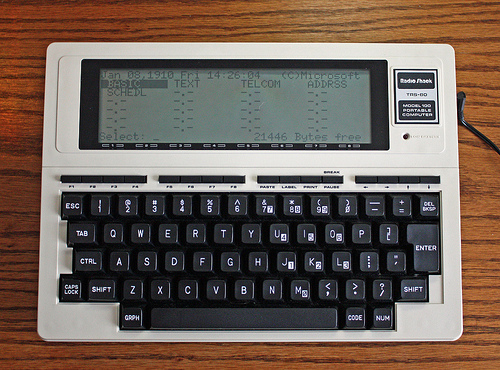

When I first got my hands on a word processor, it felt absolutely uncanny: The words! They’re … they’re moving around! THEY LOOK LIKE PRINTED WORDS BUT THEY’RE MOVING AROUND. But pretty quickly I grasped the new style of composition that was possible, and I loved it. Precisely as Engelbart envisioned, I could write longer, more discursive drafts, letting my thoughts wander into ever-more-creative-or-weirder nooks, and taking arguments to their logical endpoint just to see where they’d lead. I could give myself mental permission to do this because it was easy to redact the best parts into my final essay. Robert Frost talked about how he couldn’t tell what a poem was going to be about until he’d finished writing it. That’s what word processors did to my academic and journalistic writing: As the mechanical act of writing became easier, it became easier to write prodigiously as a way of sussing out my own thoughts.

It’s hard to remember now, but many people back in the 80s totally freaked out about word processing. I recall professors worrying that it would make students write more sloppily, and even think more sloppily. The fluidity of cutting and pasting seemed intellectually suspicious. I even remember one of my TAs arguing — in a lovely foreshadowing of today’s fears that “the Internet is making us stupid” — that cutting and pasting would render our generation unable to craft a coherent argument, because the sheer slipperiness of digital prose, its slithy rearrangeability, would render our ideas and prose rootless, nonsequential, and flighty.

Mind you, they weren’t entirely wrong. Cut-and-paste poses cognitive risks that plague me even today. Sometimes when I’m working on a story, I’ll cut and paste so many bloated passages from white papers and interviews into my “research file” that it eventually metastasizes to the length of Infinite Jest, and becomes completely useless. (Fascinatingly, some scientific studies (PDF link) have found that the most high-performing students resist this impulse: During research, they’re more judicious in their use of cut-and-paste than their lower-performing peers.) And I also find there are times when I need to step away from word processing. When I’m blocked on a piece of writing, particularly when I need to do big-picture structural thinking about the shape of a long article, I often reach for a pencil and a huge piece of paper, so I can diagram the flow. (And hey: There are word-processing holdouts even more hard-core, like the excellent sci-fi novelist Joe Haldeman, who writes not only exclusively in longhand but by the light of oil lamps.)

It’s an interesting question either way. How has the word processor changed the way we think? How has it changed the way you think?

I'm Clive Thompson, the author of Smarter Than You Think: How Technology is Changing Our Minds for the Better (Penguin Press). You can order the book now at Amazon, Barnes and Noble, Powells, Indiebound, or through your local bookstore! I'm also a contributing writer for the New York Times Magazine and a columnist for Wired magazine. Email is here or ping me via the antiquated form of AOL IM (pomeranian99).
